Recurrent Neural Networks (Deep Learning Notes C5W1A)

These are some notes for the Coursera Course on Sequence Models, which is the last part of the Deep Learning Specialization. This post is a summary of the course contents that I learned from Week 1.

I have split the notes for Week 1 into two parts. This is the first part which covers the basics of RNN, and the next post will cover LSTM and GRU networks, two specialized type of RNN that are capable of learning long-range dependencies in time series data.

These notes are far from original. All credits go to the course instructor Andrew Ng and his team.

Sequence models allow AI systems to understand and make predictions based on time-series data. The order of the data points in such tasks is important for generate outputs. Examples are natural language processing, speech recognition, and music generation.

Notations and Representation of Words

Suppose we want the model to analyse a sentence and identify the names in it, so the inputs are the words and the outputs are binary that tell if each word is a name or not. For example, if the input sentence is:

\[\text{Harry Potter and Hermonie Granger invented a new spell.}\]Then the expected output of the named-entity recognition task is:

\[1 \quad 1\quad 0 \quad 1 \quad 1 \quad 0 \quad 0 \quad 0 \quad 0\]To represent each individual word in the input sequence, we can choose a dictionary containing all the words of interest, and then use one-hot representation for each word. If we encounter a word that is not in the vocabulary, we can represent the unknown work with the <UNK> token.

For such chronologically ordered data, we may index the inputs and outputs as

\[\mathbf{x}^{(i)\langle t \rangle} \qquad \hat{\mathbf{y}}^{(i)\langle t \rangle}\]-

Superscript \((i)\) denotes an object associated with the \(i^\text{th}\) training example.

-

Superscript \(\langle t \rangle\) denotes an object at the \(t^\text{th}\) time step.

-

Superscript \([l]\) denotes an object associated with the \(l^\text{th}\) layer.

-

Subscript \(j\) denotes the \(j^\text{th}\) entry of a vector.

Recurrent Neural Networks

Why Not Standard Neural Networks?

Problems with a standard neural network for sequential tasks include:

-

Inputs and outputs may be different in lengths.

This can be solved by padding with the maximum length, but it is not always a good solution.

-

Features learned across different positions of the sequence are not shared.

For example, in name entity recognition tasks, a person’s name reappearing in a different position simply means the same word is also a name.

-

The length of the inputs can be very big, depending on the size of the dictionary, so the network can have a huge number of parameters.

Features of Recurrent Neural Networks

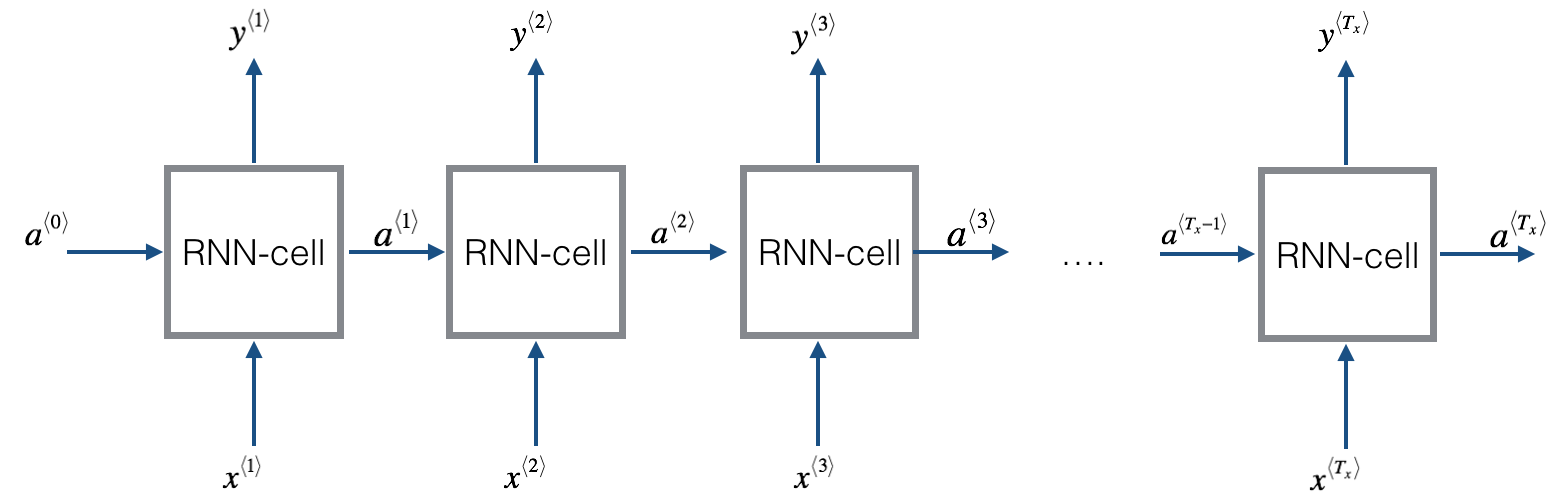

Recurrent Neural Networks (RNNs) effective for natural language processing and other sequence tasks because they have “memory”. They do so by maintaining a hidden state \(\mathbf{a}^{\langle t \rangle}\) that stores information from previous steps. Instead of processing each input independently, RNNs use recurrent connections to pass information from one step to the next. RNNs process one word at a time step, update the corresponding hidden state up to that point. This allows an RNN to process sequences of arbitrary lengths.

The discussions above apply to what we call a uni-directional (one-way) RNN, which means that the RNN can take information from the past to process later inputs, i.e., only forward activations are used to compute the output:

\[\hat{\mathbf{y}}^{\langle t \rangle} = g(\mathbf{W}_{ya} \mathbf{a}^{\langle t \rangle} + \mathbf{b}_y)\]It is also possible to build bidirectional (two-way) RNNs to take context from both the past and the future. In this case, both the forward and backward activations are used to compute the output:

\[\hat{\mathbf{y}}^{\langle t \rangle} = g(\mathbf{W}_{ya} [\mathbf{a}^{\langle t \rangle}, \mathbf{a'}^{\langle t \rangle} ] + \mathbf{b}_y)\]Another key mechanism in RNNs is the repeated use of the same set of weight parameters across all time steps. At each time step, the network takes two inputs: the current data point \(\mathbf{x}^{\langle t \rangle}\) (such as words) and the hidden state from the previous step \(\mathbf{a}^{\langle t - 1\rangle}\). These two sets of inputs are combined using the same weights and activation functions to produce a new hidden state and an output. Such parameter sharing makes RNNs more efficient than standard neural networks for sequential tasks.

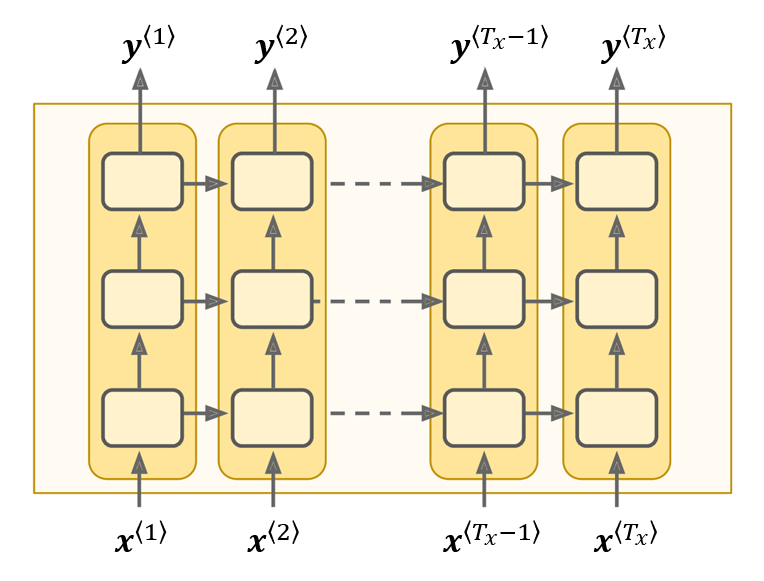

For learning very complex functions, it is useful to stack multiple layers of RNNs to build deeper versions of these models. For each time step, we can stack many hidden layers and build lateral connections between them. A deep RNN with three layers would look like this:

Types of RNNs

So far we are focussing an RNN architecture such that size of the input sequence \(T_x\) is equal to the size of the output sequence \(T_y\). But in many problems, \(T_x\) and \(T_y\) can be different, leading to the need of different architectures.

- Many to many, like machine translation (would need two parts, an encoder and a decoder)

- Many to one, like sentiment analysis (input a sequence and output a sentiment such as positive, negative or neutral)

- One to many, like music generation (input one note and the network generates a series of music notes, and reuses the outputs as new inputs to generate further music notes)

Problems with RNNs

One of the major issue with RNNs is the vanishing and exploding gradient problem. We can have very long dependencies, like in the sentence “the person/people who …… is/are “, depending on the subject being singular or plural, the network needs to output the correct prediction much later in the sequence.

To learn such long dependencies, the gradient needs to backpropagate over very large number of steps to affect the earlier layers. But this is not always possible because of the problem of vanishing and exploding gradients. Therefore, we only have local dependencies in RNNs, where each word only depend on a limited number of words preceding it.

For exploding gradient we can apply gradient clipping. That is to clip any overly large values of gradients according to some threshold value whenever they appear to explode during forward propagation.

Vanishing gradient is a much trickier problem. It could be mitigated with better weight initialisation schemes, and new architectures such as LSTM/GRU networks that we will discuss later on.

Basic RNN Cells

Forward Propagation

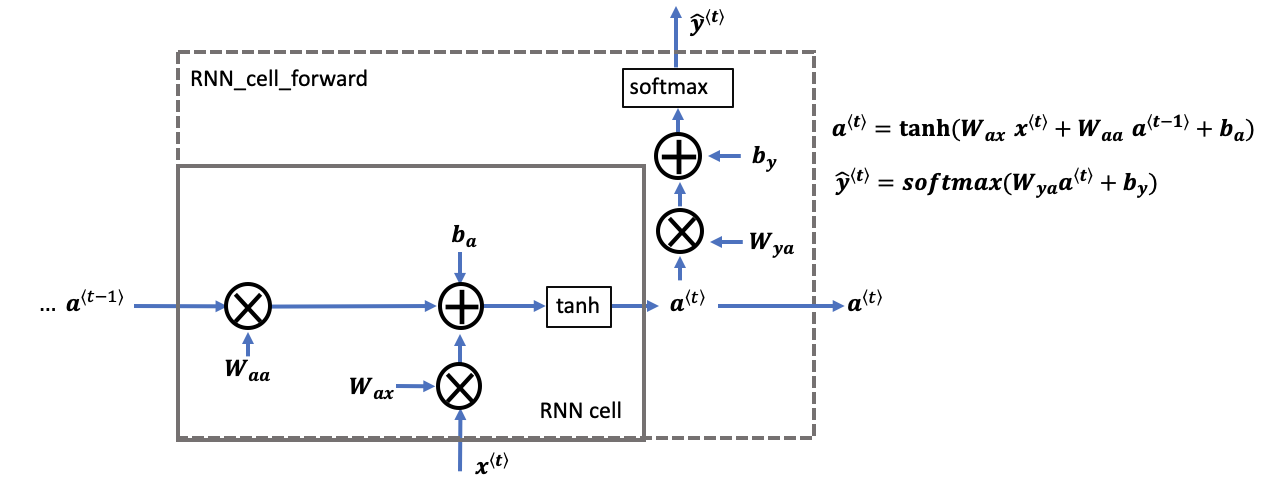

We can think of the RNN as the repeated use of a single cell. The following figure describes the operations during a single time step of a basic RNN cell.

Each basic RNN cell takes as input \(\mathbf{x}^{\langle t \rangle}\) (input from the current time step) and \(\mathbf{a}^{\langle t - 1\rangle}\) (previous hidden state that contains information from the past time steps), and outputs a prediction \(\hat{\mathbf{y}}^{\langle t \rangle}\) for the current time step together with a new hidden state \(\mathbf{a}^{\langle t \rangle}\) which is passed on to the next RNN cells.

\[\mathbf{a}^{\langle t \rangle} = \tanh(\mathbf{W}_{aa} \mathbf{a}^{\langle t-1 \rangle} + \mathbf{W}_{ax} \mathbf{x}^{\langle t \rangle} + \mathbf{b}_a) \\ \hat{\mathbf{y}}^{\langle t \rangle} = \text{softmax}(\mathbf{W}_{ya} \mathbf{a}^{\langle t \rangle} + \mathbf{b}_y)\]Note that the hyperbolic \(\tanh\) is chosen as the activation function so that \(\mathbf{a}^{\langle t \rangle}\) is always a tensor containing values within the range \((-1, 1)\).

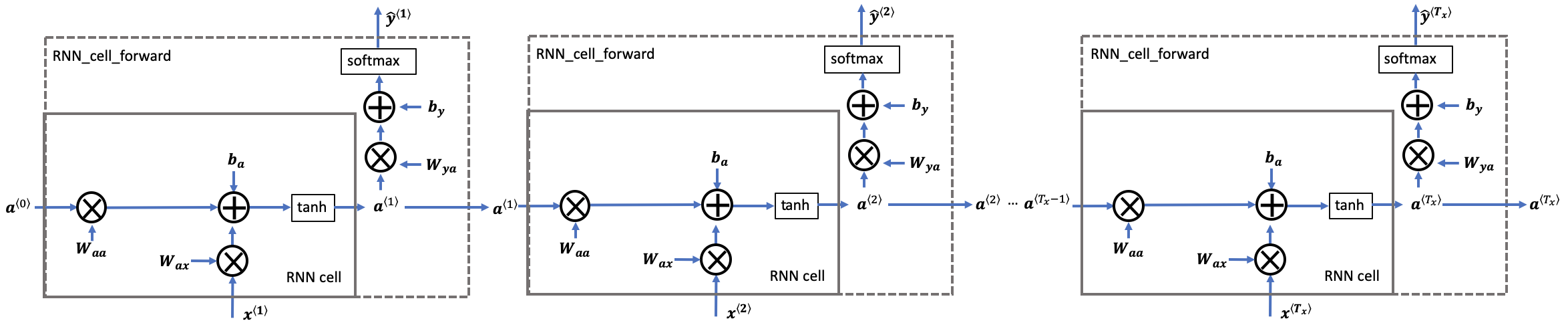

A recurrent neural network (RNN) is a repetition of the basic RNN cells, where the weights and biases \((\mathbf{W}_{aa}, \mathbf{W}_{ax}, \mathbf{b}_{a}, \mathbf{W}_{ay}, \mathbf{b}_{y})\) are reused at each time step.

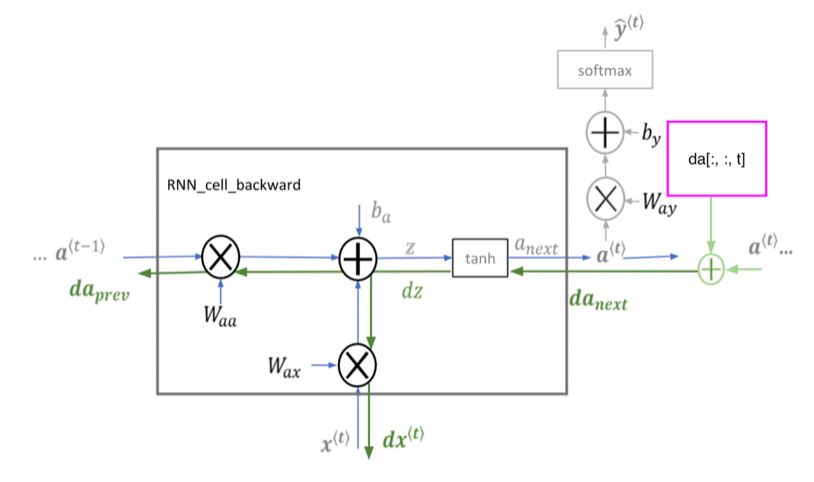

Backward Propagation

For convenience, let’s introduce the pre-activation variable:

\[\mathbf{z}^{\langle t \rangle} = \mathbf{W}_{ax} \mathbf{x}^{\langle t \rangle} + \mathbf{W}_{aa} \mathbf{a}^{\langle t-1 \rangle} + \mathbf{b}_{a}\]We can then write the output of the basic cell as:

\[\mathbf{a}^{\langle t \rangle} = \tanh(\mathbf{z}^{\langle t \rangle})\]We start deriving the partial derivatives of the cost functions with the following:

\[\begin{align*} d \tanh = \frac{\partial J}{\partial \mathbf{z}^{\langle t \rangle}} &= \frac{\partial J}{\partial \mathbf{a}^{\langle t \rangle}} \frac{\partial \mathbf{a}^{\langle t \rangle}}{\partial \mathbf{z}^{\langle t \rangle}} \\ & = d \mathbf{a}^{\langle t \rangle} * \left( 1 - \tanh^2 (\mathbf{z}^{\langle t \rangle}) \right) \\ \Rightarrow d \tanh &= d \mathbf{a}^{\langle t \rangle} * \left( 1 - (\mathbf{a}^{\langle t \rangle})^2 \right) \end{align*}\]Here I am using the notation from Andrew’s course, but I personally find it more pleasing to change \(d \tanh\) into \(d \mathbf{z}^{\langle t \rangle}\). Anyway, we may then proceed to find the partial derivatives with respect to the weights, biases and outputs from the previous layer:

\[\begin{align*} d \mathbf{W}_{ax} &= \frac{\partial J}{\partial \mathbf{z}^{\langle t \rangle}} \frac{\partial \mathbf{z}^{\langle t \rangle}}{\partial \mathbf{W}_{ax}} = d \tanh \cdot {\mathbf{x}^{\langle t \rangle}}^T \\ d\mathbf{W}_{aa} &= \frac{\partial J}{\partial \mathbf{z}^{\langle t \rangle}} \frac{\partial \mathbf{z}^{\langle t \rangle}}{\partial \mathbf{W}_{aa}} = d\tanh \cdot {\mathbf{a}^{\langle t-1 \rangle}}^T \\ d\mathbf{b}_a & = \frac{\partial J}{\partial \mathbf{u}^{\langle t \rangle}} \frac{\partial \mathbf{z}^{\langle t \rangle}}{\partial \mathbf{b}_{a}} = \sum_\text{batch}d\tanh \\ d\mathbf{x}^{\langle t \rangle} &= \frac{\partial J}{\partial \mathbf{z}^{\langle t \rangle}} \frac{\partial \mathbf{z}^{\langle t \rangle}}{\partial \mathbf{x}^{\langle t \rangle}} = {\mathbf{W}_{ax}}^T \cdot d\tanh \\ d\mathbf{a}^{\langle t-1 \rangle} &= \frac{\partial J}{\partial \mathbf{z}^{\langle t \rangle}} \frac{\partial \mathbf{z}^{\langle t \rangle}}{\partial \mathbf{a}^{\langle t-1 \rangle}} = { \mathbf{W}_{aa}}^T \cdot d\tanh \end{align*}\]We may check that these parameter gradients make sense by checking the dimensions of the vectors and tensors that appear on both sides:

\[\begin{align*} d\mathbf{W}_{ax}: \quad & (n_a \times n_x) = (n_a \times 1) \cdot (1\times n_x) \\ d\mathbf{W}_{aa} : \quad & (n_a \times n_a) = (n_a \times 1) \cdot (1\times n_a) \\ d\mathbf{b}_a : \quad & (n_a \times 1) = (n_a \times 1) \\ d\mathbf{x}^{\langle t \rangle} : \quad & (n_x \times 1) = (n_x \times n_a) \cdot (n_a\times 1) \\ d\mathbf{a}^{\langle t-1 \rangle} : \quad & (n_a \times 1) = (n_a \times n_a) \cdot (n_a\times 1) \end{align*}\]