LSTM and GRU Networks (Deep Learning Notes C5W1B)

These are some notes for the Coursera Course on Sequence Models, which is the last part of the Deep Learning Specialization. This post is a summary of the course contents that I learned from Week 1.

I have split the notes for Week 1 into two parts. This is the second part, which covers LSTM and GRU networks, two specialized types of RNN that mitigate the vanishing gradient problem encountered by traditional RNNs.

These notes are far from original. All credit goes to the course instructor Andrew Ng and his team.

Long Short-Term Memory (LSTM) Networks: Forward Propagation

Long Short-Term Memory (LSTM) networks are a specialized type of RNN designed to learn long-range dependencies in sequential data, mitigating the vanishing gradient problem encountered by traditional RNNs. They use a gating mechanism to selectively remember or forget information over many time steps, which makes them well suited to sequence data such as text and time series.

An LSTM is similar to an RNN in that both use hidden states to pass information along. In addition to the hidden state \(\mathbf{a}^{\langle t \rangle}\), an LSTM network tracks and updates a cell state, or memory variable, \(\mathbf{c}^{\langle t \rangle}\) at every time step, which can differ from \(\mathbf{a}^{\langle t \rangle}\). The cell state provides extra memory that is passed along the network from one time step to the next.

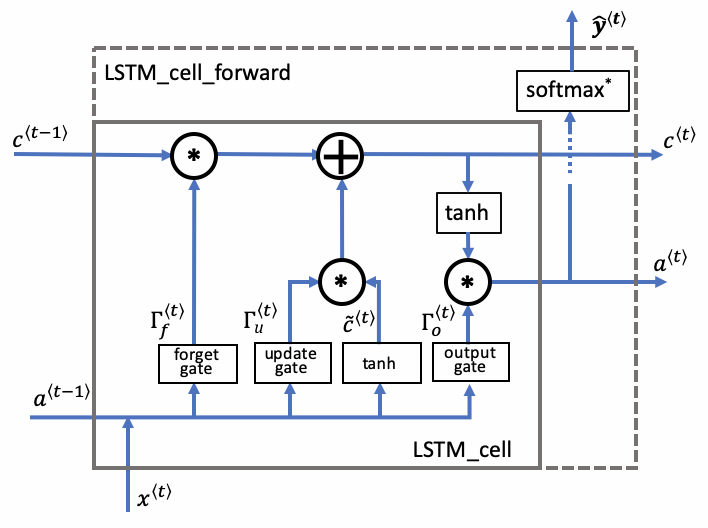

The key ingredients of an LSTM cell include

- a cell state \(\mathbf{c}^{\langle t \rangle}\), which is like a long-term memory

- a hidden state \(\mathbf{a}^{\langle t \rangle}\), which is like a short-term memory

- three gates (forget gate, update gate and output gate) that regulate which information is stored or discarded, depending on the relevance of the inputs

The following figure illustrates the operations for a single time step of an LSTM cell.

Forget gate \(\mathbf{\Gamma}_{f}\)

\[\mathbf{\Gamma}_f^{\langle t \rangle} = \sigma(\mathbf{W}_f[\mathbf{a}^{\langle t-1 \rangle}, \mathbf{x}^{\langle t \rangle}] + \mathbf{b}_f)\]The forget gate is a tensor containing values between 0 and 1. To calculate this tensor, we first take a linear combination of \(\mathbf{a}^{\langle t-1 \rangle}\) (hidden state of the previous time step) and \(\mathbf{x}^{\langle t \rangle}\) (input of the current time step), and then apply a sigmoid function to make each of the tensor’s values range from 0 to 1 as desired.

The forget gate helps us keep track of grammatical structures such as whether the subject in a piece of text is singular or plural. If the subject changes its state, say from a singular word to a plural word, then the memory of the previous state becomes outdated. In that case the corresponding unit in the forget gate gets a value close to 0, and the LSTM forgets the outdated value stored in the previous cell state \(\mathbf{c}^{\langle t-1 \rangle}\). If a unit in the forget gate gets a value close to 1, the LSTM mostly keeps the stored value in the corresponding unit of \(\mathbf{c}^{\langle t-1 \rangle}\).
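To make the effect of the forget gate concrete, here is a minimal NumPy sketch. The pre-activation values and the stored cell values are made-up numbers chosen for illustration; they are not taken from the course.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

# Hypothetical pre-activations W_f [a_prev, x] + b_f for three hidden units.
z_f = np.array([-6.0, 0.0, 6.0])
# Hypothetical values stored in the previous cell state c^{<t-1>}.
c_prev = np.array([2.0, 2.0, 2.0])

gamma_f = sigmoid(z_f)        # roughly 0.002, 0.5 and 0.998
retained = gamma_f * c_prev   # the part of c_prev that survives the forget gate

print(np.round(gamma_f, 3))
print(np.round(retained, 3))
```

The first unit is almost completely forgotten, the second is halved, and the third is kept nearly intact.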

Candidate value \(\tilde{\mathbf{c}}^{\langle t \rangle}\)

\[\mathbf{\tilde{c}}^{\langle t \rangle} = \tanh\left( \mathbf{W}_{c} [\mathbf{a}^{\langle t - 1 \rangle}, \mathbf{x}^{\langle t \rangle}] + \mathbf{b}_{c} \right)\]The candidate value is a tensor containing values between \(-1\) and \(1\), which is guaranteed by the range of the \(\tanh\) function.

The candidate value \(\tilde{\mathbf{c}}^{\langle t \rangle}\) contains information from the current time step that may be stored in the current cell state \(\mathbf{c}^{\langle t \rangle}\). Some part of the candidate value \(\tilde{\mathbf{c}}^{\langle t \rangle}\) gets passed on to \(\mathbf{c}^{\langle t \rangle}\), depending on the update gate \(\mathbf{\Gamma}_u^{\langle t \rangle}\).

Update gate \(\mathbf{\Gamma}_{u}\)

\[\mathbf{\Gamma}_u^{\langle t \rangle} = \sigma(\mathbf{W}_u[\mathbf{a}^{\langle t-1 \rangle}, \mathbf{x}^{\langle t \rangle}] + \mathbf{b}_u)\]Similar to the forget gate, the update gate produces values between 0 and 1.

The update gate decides which part of the candidate tensor \(\tilde{\mathbf{c}}^{\langle t \rangle}\) gets passed onto the new cell state \(\mathbf{c}^{\langle t \rangle}\). When a unit in the update gate is close to 1, it allows the value of the candidate \(\tilde{\mathbf{c}}^{\langle t \rangle}\) to be passed onto the cell state \(\mathbf{c}^{\langle t \rangle}\). When a unit in the update gate is close to 0, it prevents the corresponding value in the candidate from being passed on.

Cell state \(\mathbf{c}^{\langle t \rangle}\)

\[\mathbf{c}^{\langle t \rangle} = \mathbf{\Gamma}_f^{\langle t \rangle}* \mathbf{c}^{\langle t-1 \rangle} + \mathbf{\Gamma}_{u}^{\langle t \rangle} * \mathbf{\tilde{c}}^{\langle t \rangle}\]The new cell state \(\mathbf{c}^{\langle t \rangle}\) is a linear combination of the previous cell state \(\mathbf{c}^{\langle t-1 \rangle}\) and the candidate value \(\mathbf{\tilde{c}}^{\langle t \rangle}\). The weights of this linear combination are exactly the forget gate \(\mathbf{\Gamma}_{f}^{\langle t \rangle}\) and the update gate \(\mathbf{\Gamma}_{u}^{\langle t \rangle}\) that we have introduced above.

We can think of the cell state \(\mathbf{c}^{\langle t \rangle}\) as the long-term memory that gets passed on to future time steps.
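As a small worked example with made-up numbers for two hidden units, suppose \(\mathbf{\Gamma}_f^{\langle t \rangle} = (0.9, 0.1)\), \(\mathbf{c}^{\langle t-1 \rangle} = (2.0, -1.0)\), \(\mathbf{\Gamma}_u^{\langle t \rangle} = (0.2, 0.8)\) and \(\mathbf{\tilde{c}}^{\langle t \rangle} = (0.5, 0.5)\). The element-wise update then gives

\[\mathbf{c}^{\langle t \rangle} = \begin{bmatrix} 0.9 \times 2.0 + 0.2 \times 0.5 \\ 0.1 \times (-1.0) + 0.8 \times 0.5 \end{bmatrix} = \begin{bmatrix} 1.9 \\ 0.3 \end{bmatrix}\]

The first unit mostly keeps its old memory, while the second unit is mostly overwritten by the candidate.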

Output gate \(\mathbf{\Gamma}_{o}\)

\[\mathbf{\Gamma}_o^{\langle t \rangle}= \sigma(\mathbf{W}_o[\mathbf{a}^{\langle t-1 \rangle}, \mathbf{x}^{\langle t \rangle}] + \mathbf{b}_{o}) \\ \mathbf{a}^{\langle t \rangle} = \mathbf{\Gamma}_o^{\langle t \rangle} * \tanh(\mathbf{c}^{\langle t \rangle})\]Like the other gates, the output gate takes values between 0 and 1.

The output gate decides what fraction of the squashed cell state \(\tanh(\mathbf{c}^{\langle t \rangle})\) gets exposed as the hidden state \(\mathbf{a}^{\langle t \rangle}\) of the current time step.

Prediction \(\mathbf{y}^{\langle t \rangle}_\text{pred}\)

\[\mathbf{y}^{\langle t \rangle}_\text{pred} = \text{softmax}(\mathbf{W}_{y} \mathbf{a}^{\langle t \rangle} + \mathbf{b}_{y})\]The prediction in our case is a classification, so a softmax function is used.
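The prediction step can be sketched in NumPy as follows. The layer sizes and random weights are hypothetical, and subtracting the column maximum before exponentiating is a common numerical-stability trick that I am adding here; it does not change the result.

```python
import numpy as np

def softmax(z):
    # Column-wise softmax; shifting by the max avoids overflow in exp.
    z = z - np.max(z, axis=0, keepdims=True)
    e = np.exp(z)
    return e / np.sum(e, axis=0, keepdims=True)

rng = np.random.default_rng(1)
n_y, n_a = 5, 4                      # hypothetical number of classes / hidden units
W_y = rng.standard_normal((n_y, n_a))
b_y = np.zeros((n_y, 1))
a_t = rng.standard_normal((n_a, 1))  # stand-in for the hidden state a^{<t>}

y_pred = softmax(W_y @ a_t + b_y)    # class probabilities, one column per example
print(y_pred.ravel(), y_pred.sum())
```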

Summary

In a nutshell, the algebraic operations for the forward pass are:

- Forget gate: \(\mathbf{\Gamma}_f^{\langle t \rangle} = \sigma(\mathbf{W}_f[\mathbf{a}^{\langle t-1 \rangle}, \mathbf{x}^{\langle t \rangle}] + \mathbf{b}_f)\)

- Candidate value: \(\mathbf{\tilde{c}}^{\langle t \rangle} = \tanh\left( \mathbf{W}_{c} [\mathbf{a}^{\langle t - 1 \rangle}, \mathbf{x}^{\langle t \rangle}] + \mathbf{b}_{c} \right)\)

- Update gate: \(\mathbf{\Gamma}_u^{\langle t \rangle} = \sigma(\mathbf{W}_u[\mathbf{a}^{\langle t - 1 \rangle}, \mathbf{x}^{\langle t \rangle}] + \mathbf{b}_u)\)

- Cell state: \(\mathbf{c}^{\langle t \rangle} = \mathbf{\Gamma}_f^{\langle t \rangle}* \mathbf{c}^{\langle t-1 \rangle} + \mathbf{\Gamma}_{u}^{\langle t \rangle} *\mathbf{\tilde{c}}^{\langle t \rangle}\)

- Output gate: \(\mathbf{\Gamma}_o^{\langle t \rangle}= \sigma(\mathbf{W}_o[\mathbf{a}^{\langle t-1 \rangle}, \mathbf{x}^{\langle t \rangle}] + \mathbf{b}_{o})\)

- Hidden state: \(\mathbf{a}^{\langle t \rangle} = \mathbf{\Gamma}_o^{\langle t \rangle} * \tanh(\mathbf{c}^{\langle t \rangle})\)

- Prediction: \(\mathbf{y}^{\langle t \rangle}_\text{pred} = \text{softmax}(\mathbf{W}_{y} \mathbf{a}^{\langle t \rangle} + \mathbf{b}_{y})\)
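The equations above translate almost line by line into NumPy. The following is a minimal sketch rather than the course's reference implementation: the function names, the parameter dictionary `p`, the layer sizes and the random initialization are all my own assumptions.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def softmax(z):
    z = z - np.max(z, axis=0, keepdims=True)
    e = np.exp(z)
    return e / np.sum(e, axis=0, keepdims=True)

def lstm_cell_forward(x_t, a_prev, c_prev, p):
    """One LSTM time step. Shapes: x_t (n_x, m); a_prev, c_prev (n_a, m)."""
    concat = np.vstack([a_prev, x_t])               # [a^{<t-1>}, x^{<t>}]
    gamma_f = sigmoid(p["Wf"] @ concat + p["bf"])   # forget gate
    c_tilde = np.tanh(p["Wc"] @ concat + p["bc"])   # candidate value
    gamma_u = sigmoid(p["Wu"] @ concat + p["bu"])   # update gate
    c_t = gamma_f * c_prev + gamma_u * c_tilde      # cell state
    gamma_o = sigmoid(p["Wo"] @ concat + p["bo"])   # output gate
    a_t = gamma_o * np.tanh(c_t)                    # hidden state
    y_pred = softmax(p["Wy"] @ a_t + p["by"])       # prediction
    return a_t, c_t, y_pred

def init_params(n_a, n_x, n_y, seed=0):
    """Hypothetical initialization: small random weights, zero biases."""
    rng = np.random.default_rng(seed)
    p = {}
    for g in "fuco":
        p["W" + g] = rng.standard_normal((n_a, n_a + n_x)) * 0.1
        p["b" + g] = np.zeros((n_a, 1))
    p["Wy"] = rng.standard_normal((n_y, n_a)) * 0.1
    p["by"] = np.zeros((n_y, 1))
    return p

# Run the cell over a short random sequence (T steps, batch of m examples).
n_a, n_x, n_y, m, T = 5, 3, 2, 4, 6
p = init_params(n_a, n_x, n_y)
x = np.random.default_rng(1).standard_normal((T, n_x, m))
a = np.zeros((n_a, m))
c = np.zeros((n_a, m))
for t in range(T):
    a, c, y = lstm_cell_forward(x[t], a, c, p)
print(a.shape, c.shape, y.shape)
```

Note that the same parameters `p` are reused at every time step; only `a` and `c` change as the sequence is processed.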

Long Short-Term Memory (LSTM) Networks: Backward Propagation

In this section, we give a step-by-step derivation for the derivatives for backward propagation at the level of each individual gate.

Gate Derivatives

For convenience, let’s introduce pre-activation variables

\[\begin{align*} \mathbf{z}_o^{\langle t \rangle} &= \mathbf{W}_o[\mathbf{a}^{\langle t-1 \rangle}, \mathbf{x}^{\langle t \rangle}] + \mathbf{b}_{o} \\ \mathbf{z}_u^{\langle t \rangle} &= \mathbf{W}_u[\mathbf{a}^{\langle t-1 \rangle}, \mathbf{x}^{\langle t \rangle}] + \mathbf{b}_{u} \\ \mathbf{z}_f^{\langle t \rangle} &= \mathbf{W}_f[\mathbf{a}^{\langle t-1 \rangle}, \mathbf{x}^{\langle t \rangle}] + \mathbf{b}_{f} \\ \mathbf{z}_c^{\langle t \rangle} &= \mathbf{W}_{c} [\mathbf{a}^{\langle t - 1 \rangle}, \mathbf{x}^{\langle t \rangle}] + \mathbf{b}_{c} \end{align*}\]So the gate values and the candidate values can be expressed nicely as

- Output gate: \(\mathbf{\Gamma}_o^{\langle t \rangle}= \sigma(\mathbf{z}_o^{\langle t \rangle})\)

- Candidate value: \(\mathbf{\tilde{c}}^{\langle t \rangle} = \tanh\left(\mathbf{z}_c^{\langle t \rangle} \right)\)

- Update gate: \(\mathbf{\Gamma}_u^{\langle t \rangle}= \sigma(\mathbf{z}_u^{\langle t \rangle})\)

- Forget gate: \(\mathbf{\Gamma}_f^{\langle t \rangle} = \sigma(\mathbf{z}_f^{\langle t \rangle})\)

The derivative for the output gate is easy to find. Recall that for the sigmoid function \(\displaystyle \sigma(z) = \frac{1}{1 + e^{-z}}\), we have \(\sigma'(z) = \sigma(z)(1-\sigma(z))\). So we have:

\[\begin{align*} d \mathbf{z}_o^{\langle t \rangle} &= \frac{\partial J}{\partial \mathbf{z}_o^{\langle t \rangle}} = \frac{\partial J}{\partial \mathbf{a}^{\langle t \rangle}} \frac{\partial \mathbf{a}^{\langle t \rangle}}{\partial \mathbf{\Gamma}_o^{\langle t \rangle}} \frac{\partial \mathbf{\Gamma}_o^{\langle t \rangle}}{\partial \mathbf{z}_o^{\langle t \rangle}} \\ \Rightarrow d \mathbf{z}_o^{\langle t \rangle} & = d \mathbf{a}^{\langle t \rangle} * \tanh(\mathbf{c}^{\langle t \rangle}) * \mathbf{\Gamma}_o^{\langle t \rangle} * \left(1 - \mathbf{\Gamma}_o^{\langle t \rangle} \right) \end{align*}\]For the other gate derivatives, note that both the forget gate and the update gate participate in the computation of the cell state: \(\mathbf{c}^{\langle t \rangle} = \mathbf{\Gamma}_f^{\langle t \rangle}* \mathbf{c}^{\langle t-1 \rangle} + \mathbf{\Gamma}_{u}^{\langle t \rangle} *\mathbf{\tilde{c}}^{\langle t \rangle}\). This means that when we compute these gate derivatives, the gradient of the cell state receives contributions from two sources: the loss at the current time step and the loss at future time steps. Because of this recurrence structure in \(\mathbf{c}^{\langle t \rangle}\), the full gradient of the cell state is:

\[\begin{align*} \frac{\partial J}{\partial \mathbf{c}^{\langle t \rangle}} &= \frac{\partial J}{\partial \mathbf{c}^{\langle t \rangle}}\bigg|_{\text{local}} + \frac{\partial J}{\partial \mathbf{c}^{\langle t \rangle}}\bigg|_{\text{future}} \\ &= \frac{\partial J}{\partial \mathbf{a}^{\langle t \rangle}} \frac{\partial \mathbf{a}^{\langle t \rangle}}{\partial \mathbf{c}^{\langle t \rangle}} + \frac{\partial J}{\partial \mathbf{c}^{\langle t+1 \rangle}} \frac{\partial \mathbf{c}^{\langle t+1 \rangle}}{\partial \mathbf{c}^{\langle t \rangle}} \end{align*}\]With \(\mathbf{a}^{\langle t \rangle} = \mathbf{\Gamma}_o^{\langle t \rangle} * \tanh(\mathbf{c}^{\langle t \rangle})\) and \(\mathbf{c}^{\langle t+1 \rangle} = \mathbf{\Gamma}_f^{\langle t+1 \rangle}* \mathbf{c}^{\langle t \rangle} + \mathbf{\Gamma}_{u}^{\langle t+1 \rangle} *\mathbf{\tilde{c}}^{\langle t+1 \rangle}\), we further obtain

\[d \mathbf{c}^{\langle t \rangle} = d\mathbf{a}^{\langle t \rangle} * \mathbf{\Gamma}_o^{\langle t \rangle} * \left(1 - \tanh^2(\mathbf{c}^{\langle t \rangle}) \right) + d\mathbf{c}^{\langle t+1 \rangle} * \mathbf{\Gamma}_f^{\langle t+1 \rangle}\]In implementation, we can introduce the passed-back variable \(d\mathbf{c}_\text{prev}^{\langle t \rangle} = d\mathbf{c}^{\langle t+1 \rangle}* \mathbf{\Gamma}_f^{\langle t+1 \rangle}\) at time step \(t+1\), so at time step \(t\) we receive a single tensor \(d\mathbf{c}_\text{prev}^{\langle t \rangle}\) with the \(\mathbf{\Gamma}_f^{\langle t+1 \rangle}\) factor already pre-multiplied, and we use that to compute the full gradient \(d\mathbf{c}^{\langle t \rangle}\) at time step \(t\), i.e.,

\[\frac{\partial J}{\partial \mathbf{c}^{\langle t \rangle}} = d\mathbf{a}^{\langle t \rangle} * \mathbf{\Gamma}_o^{\langle t \rangle} * \left(1 - \tanh^2(\mathbf{c}^{\langle t \rangle}) \right) + d\mathbf{c}_\text{prev}^{\langle t \rangle}\]Now, for the candidate value:

\[\begin{align*} d \mathbf{z}_c^{\langle t \rangle} &= \frac{\partial J}{\partial \mathbf{z}_c^{\langle t \rangle} } = \frac{\partial J}{\partial \mathbf{c}^{\langle t \rangle}} \frac{\partial \mathbf{c}^{\langle t \rangle}}{\partial \mathbf{\tilde{c}}^{\langle t \rangle} } \frac{\partial \mathbf{\tilde{c}}^{\langle t \rangle}}{\partial \mathbf{z}_c^{\langle t \rangle} } \\ & = \frac{\partial J}{\partial \mathbf{c}^{\langle t \rangle}} * \mathbf{\Gamma}_u^{\langle t \rangle} * \left( 1- \tanh^2 (\mathbf{z}_c^{\langle t \rangle}) \right)\\ \Rightarrow d \mathbf{z}_c^{\langle t \rangle} & = d \mathbf{c}^{\langle t \rangle} * \mathbf{\Gamma}_u^{\langle t \rangle} * \left( 1- (\mathbf{\tilde{c}}^{\langle t \rangle})^2 \right) \end{align*}\]The derivative for the update gate follows similarly:

\[\begin{align*} d \mathbf{z}_u^{\langle t \rangle} &= \frac{\partial J}{\partial \mathbf{z}_u^{\langle t \rangle}} = \frac{\partial J}{\partial \mathbf{c}^{\langle t \rangle}} \frac{\partial \mathbf{c}^{\langle t \rangle}}{\partial \mathbf{\Gamma}_u^{\langle t \rangle}} \frac{\partial \mathbf{\Gamma}_u^{\langle t \rangle}}{\partial \mathbf{z}_u^{\langle t \rangle}} \\ & = \frac{\partial J}{\partial \mathbf{c}^{\langle t \rangle}} * \mathbf{\tilde{c}}^{\langle t \rangle} * \left[ \sigma(\mathbf{z}_u^{\langle t \rangle}) * (1-\sigma(\mathbf{z}_u^{\langle t \rangle}))\right] \\ \Rightarrow d \mathbf{z}_u^{\langle t \rangle} &= d \mathbf{c}^{\langle t \rangle} * \mathbf{\tilde{c}}^{\langle t \rangle} *\mathbf{\Gamma}_u^{\langle t \rangle} * \left(1 - \mathbf{\Gamma}_u^{\langle t \rangle} \right) \end{align*}\]The derivative for the forget gate is almost identical:

\[\begin{align*} d \mathbf{z}_f^{\langle t \rangle} &= \frac{\partial J}{\partial \mathbf{z}_f^{\langle t \rangle}} = \frac{\partial J}{\partial \mathbf{c}^{\langle t \rangle}} \frac{\partial \mathbf{c}^{\langle t \rangle}}{\partial \mathbf{\Gamma}_f^{\langle t \rangle}} \frac{\partial \mathbf{\Gamma}_f^{\langle t \rangle}}{\partial \mathbf{z}_f^{\langle t \rangle}} \\ \Rightarrow d \mathbf{z}_f^{\langle t \rangle} &= d \mathbf{c}^{\langle t \rangle} * \mathbf{c}^{\langle t-1 \rangle} *\mathbf{\Gamma}_f^{\langle t \rangle} * \left(1 - \mathbf{\Gamma}_f^{\langle t \rangle} \right) \end{align*}\]

Parameter Derivatives

Derivations for the parameter derivatives are straightforward:

\[\begin{align*} d\mathbf{W}_* &= d\mathbf{z}_*^{\langle t \rangle} \begin{bmatrix} \mathbf{a}^{\langle t - 1 \rangle} \\ \mathbf{x}^{\langle t \rangle} \end{bmatrix}^T \\ d\mathbf{b}_* &= \sum_\text{batch} d\mathbf{z}_*^{\langle t \rangle} \end{align*}\]where the subscript “\(*\)” stands for \(f, u, o, c\) in turn. These are the contributions from time step \(t\); the total parameter gradients are obtained by summing them over all time steps.
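For a batch of \(m\) examples stored as columns, the matrix product in \(d\mathbf{W}_*\) already sums the per-example outer products, while \(d\mathbf{b}_*\) needs an explicit sum over the batch axis. The sketch below checks this with random stand-in tensors; the sizes are arbitrary assumptions.

```python
import numpy as np

rng = np.random.default_rng(0)
n_a, n_x, m = 3, 2, 5                         # hypothetical sizes
dz = rng.standard_normal((n_a, m))            # stand-in for dz_*^{<t>}
concat = rng.standard_normal((n_a + n_x, m))  # stacked [a^{<t-1>}; x^{<t>}]

dW = dz @ concat.T                            # shape (n_a, n_a + n_x)
db = dz.sum(axis=1, keepdims=True)            # shape (n_a, 1)

# Same result written as an explicit sum of per-example outer products.
dW_loop = sum(np.outer(dz[:, i], concat[:, i]) for i in range(m))
print(np.allclose(dW, dW_loop))
```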

Derivatives with Respect to Previous States

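The derivatives with respect to the previous hidden state, the previous memory state and the input are:

\[\begin{align*} d\mathbf{a}^{\langle t - 1 \rangle} &= \mathbf{W}_{f,a}^T d\mathbf{z}_f^{\langle t \rangle} + \mathbf{W}_{u,a}^T d\mathbf{z}_u^{\langle t \rangle} + \mathbf{W}_{c,a}^T d\mathbf{z}_c^{\langle t \rangle} + \mathbf{W}_{o,a}^T d\mathbf{z}_o^{\langle t \rangle} \\ d\mathbf{c}_\text{prev}^{\langle t - 1 \rangle} &= \frac{\partial J}{\partial \mathbf{c}^{\langle t \rangle}} \frac{\partial \mathbf{c}^{\langle t \rangle}}{\partial \mathbf{c}^{\langle t-1 \rangle}} = d \mathbf{c}^{\langle t \rangle} * \mathbf{\Gamma}_f^{\langle t \rangle} \\ d\mathbf{x}^{\langle t \rangle} &= \mathbf{W}_{f,x}^T d\mathbf{z}_f^{\langle t \rangle} + \mathbf{W}_{u,x}^T d\mathbf{z}_u^{\langle t \rangle} + \mathbf{W}_{c,x}^T d\mathbf{z}_c^{\langle t \rangle} + \mathbf{W}_{o,x}^T d\mathbf{z}_o^{\langle t \rangle} \end{align*}\]Here \(\mathbf{W}_{*,a}\) and \(\mathbf{W}_{*,x}\) denote the blocks of \(\mathbf{W}_*\) that multiply \(\mathbf{a}^{\langle t-1 \rangle}\) and \(\mathbf{x}^{\langle t \rangle}\) in the stacked input, i.e. \(\mathbf{W}_* = [\mathbf{W}_{*,a}, \mathbf{W}_{*,x}]\). Note that the \(d\mathbf{c}_\text{prev}^{\langle t - 1 \rangle}\) being computed here is not the full derivative but the passed-back variable that only takes into account the future contributions to the loss function. As we have discussed earlier, this future term needs to be combined with the local terms to assemble the full gradient \(d\mathbf{c}^{\langle t-1 \rangle}\).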
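One way to gain confidence in these formulas is to implement a single backward step and compare it against finite differences. The sketch below does that in NumPy. The function names, the toy loss \(J = \sum \mathbf{R}_a * \mathbf{a}^{\langle t \rangle} + \sum \mathbf{R}_c * \mathbf{c}^{\langle t \rangle}\) and all sizes are my own assumptions; with this loss, `R_a` plays the role of \(d\mathbf{a}^{\langle t \rangle}\) and `R_c` the role of the passed-back term from step \(t+1\).

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def lstm_forward(x_t, a_prev, c_prev, p):
    """Forward step; returns a_t, c_t and a cache for the backward pass."""
    concat = np.vstack([a_prev, x_t])
    gf = sigmoid(p["Wf"] @ concat + p["bf"])
    gu = sigmoid(p["Wu"] @ concat + p["bu"])
    go = sigmoid(p["Wo"] @ concat + p["bo"])
    cc = np.tanh(p["Wc"] @ concat + p["bc"])          # candidate value
    c_t = gf * c_prev + gu * cc
    a_t = go * np.tanh(c_t)
    return a_t, c_t, (concat, gf, gu, go, cc, c_prev, c_t)

def lstm_backward(da, dc_passed, cache, p):
    """da: full dJ/da_t. dc_passed: the passed-back term from step t+1."""
    concat, gf, gu, go, cc, c_prev, c_t = cache
    n_a = c_t.shape[0]
    tanh_c = np.tanh(c_t)
    dc = da * go * (1 - tanh_c ** 2) + dc_passed      # full cell-state gradient
    dz = {"o": da * tanh_c * go * (1 - go),
          "c": dc * gu * (1 - cc ** 2),
          "u": dc * cc * gu * (1 - gu),
          "f": dc * c_prev * gf * (1 - gf)}
    grads = {}
    dconcat = np.zeros_like(concat)
    for g, dz_g in dz.items():
        grads["W" + g] = dz_g @ concat.T              # sums over the batch
        grads["b" + g] = dz_g.sum(axis=1, keepdims=True)
        dconcat += p["W" + g].T @ dz_g
    grads["a_prev"] = dconcat[:n_a]                   # rows belonging to a_prev
    grads["x"] = dconcat[n_a:]                        # rows belonging to x_t
    grads["c_prev"] = dc * gf                         # passed back to step t-1
    return grads

# Random toy problem (all sizes and values are arbitrary).
rng = np.random.default_rng(0)
n_a, n_x, m = 4, 3, 2
p = {}
for g in "fuco":
    p["W" + g] = rng.standard_normal((n_a, n_a + n_x))
    p["b" + g] = rng.standard_normal((n_a, 1))
x_t = rng.standard_normal((n_x, m))
a_prev = rng.standard_normal((n_a, m))
c_prev = rng.standard_normal((n_a, m))
R_a = rng.standard_normal((n_a, m))
R_c = rng.standard_normal((n_a, m))

def toy_loss():
    a_t, c_t, _ = lstm_forward(x_t, a_prev, c_prev, p)
    return np.sum(R_a * a_t) + np.sum(R_c * c_t)

def numerical_grad(arr, eps=1e-6):
    """Central differences, perturbing `arr` in place one entry at a time."""
    num = np.zeros_like(arr)
    for idx in np.ndindex(arr.shape):
        old = arr[idx]
        arr[idx] = old + eps
        j_plus = toy_loss()
        arr[idx] = old - eps
        j_minus = toy_loss()
        arr[idx] = old
        num[idx] = (j_plus - j_minus) / (2 * eps)
    return num

_, _, cache = lstm_forward(x_t, a_prev, c_prev, p)
grads = lstm_backward(R_a, R_c, cache, p)
targets = {"Wf": p["Wf"], "Wc": p["Wc"], "bu": p["bu"],
           "a_prev": a_prev, "c_prev": c_prev, "x": x_t}
errors = {k: np.max(np.abs(numerical_grad(v) - grads[k]))
          for k, v in targets.items()}
print(errors)
```

If the analytic formulas are implemented correctly, every entry of `errors` should be tiny compared with the gradient values themselves.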

Gated Recurrent Unit (GRU) Networks: Forward Propagation

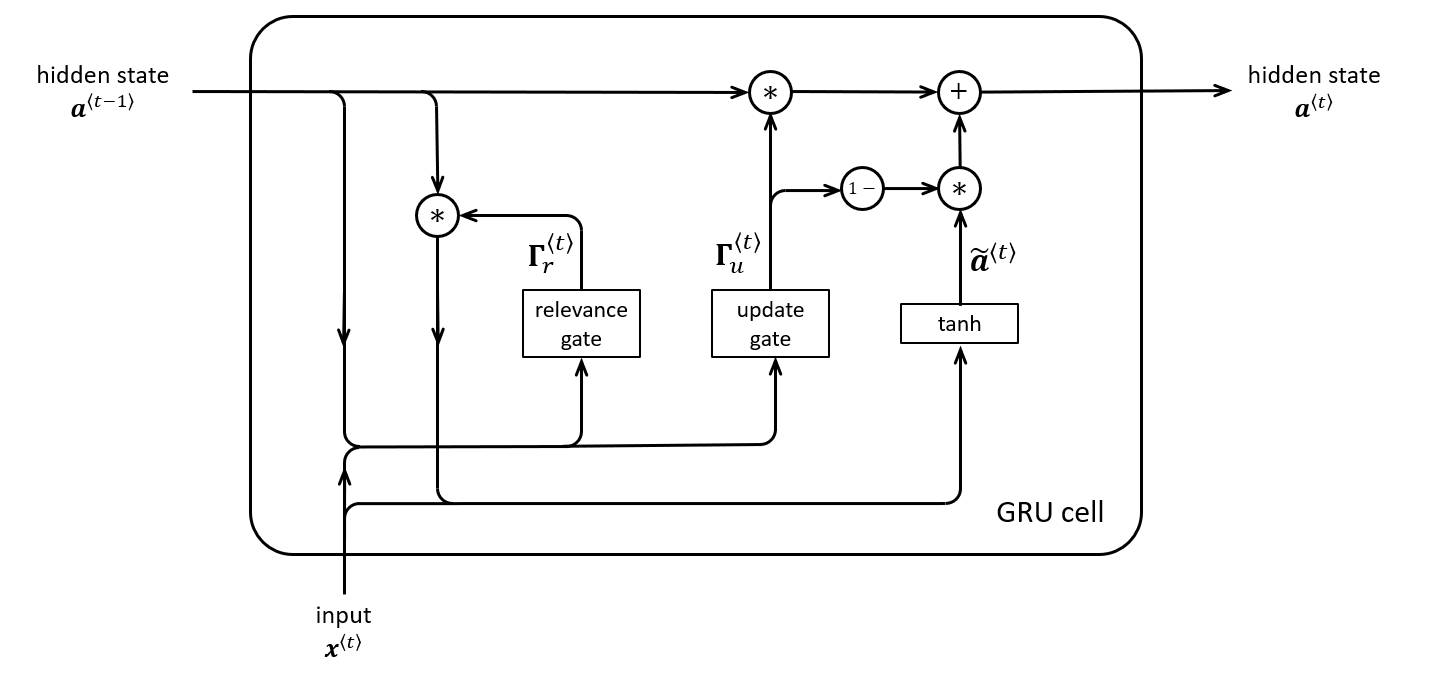

Gated Recurrent Units (GRUs) are another type of RNN architecture designed to mitigate the vanishing gradient problem. The architecture of GRU networks is similar to that of LSTMs but with fewer gates and states. As a simplified alternative to LSTMs, GRUs are usually faster and require less memory, and are often comparable in performance.

The key ingredients of a GRU cell include

- a single update gate \(\mathbf{\Gamma}_u\) that combines the roles of the update and forget gates in the LSTM. At every time step, we compute a candidate value \(\tilde{\mathbf{a}}^{\langle t \rangle}\) for the new hidden state, but it is up to the gate \(\mathbf{\Gamma}_u\) to decide whether or not to update the hidden state with the candidate.

- a relevance gate \(\mathbf{\Gamma}_r\), which determines how relevant the previous hidden state \(\mathbf{a}^{\langle t-1 \rangle}\) is for computing the candidate value \(\tilde{\mathbf{a}}^{\langle t \rangle}\)

The equations for these operations are:

- Update gate: \(\mathbf{\Gamma}_u^{\langle t \rangle} = \sigma(\mathbf{W}_u[\mathbf{a}^{\langle t-1 \rangle}, \mathbf{x}^{\langle t \rangle}] + \mathbf{b}_u)\)

- Relevance gate: \(\mathbf{\Gamma}_r^{\langle t \rangle} = \sigma(\mathbf{W}_r[\mathbf{a}^{\langle t-1 \rangle}, \mathbf{x}^{\langle t \rangle}] + \mathbf{b}_r)\)

- Candidate hidden state: \(\mathbf{\tilde{a}}^{\langle t \rangle} = \tanh\left( \mathbf{W}_{a} [\mathbf{\Gamma}_r^{\langle t \rangle} * \mathbf{a}^{\langle t - 1 \rangle}, \mathbf{x}^{\langle t \rangle}] + \mathbf{b}_{a} \right)\)

- Hidden state: \(\mathbf{a}^{\langle t \rangle} = \mathbf{\Gamma}_u^{\langle t \rangle} * \mathbf{a}^{\langle t-1 \rangle} + \left(1 - \mathbf{\Gamma}_{u}^{\langle t \rangle}\right) *\mathbf{\tilde{a}}^{\langle t \rangle}\)

Note that compared to LSTMs, GRUs have no cell states \(\mathbf{c}^{\langle t \rangle}\) but hidden states \(\mathbf{a}^{\langle t \rangle}\) only.
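The GRU forward step can be sketched in NumPy as follows, using the same sign convention as the equations above (a gate value near 1 keeps the old hidden state). The function name, sizes and weights are hypothetical, and setting \(\mathbf{W}_u = 0\) with a large bias is only a trick to force the update gate towards 1 or 0 for the demonstration.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def gru_cell_forward(x_t, a_prev, p):
    """One GRU time step. Shapes: x_t (n_x, m), a_prev (n_a, m)."""
    concat = np.vstack([a_prev, x_t])
    gamma_u = sigmoid(p["Wu"] @ concat + p["bu"])      # update gate
    gamma_r = sigmoid(p["Wr"] @ concat + p["br"])      # relevance gate
    concat_r = np.vstack([gamma_r * a_prev, x_t])      # gated previous state
    a_tilde = np.tanh(p["Wa"] @ concat_r + p["ba"])    # candidate hidden state
    a_t = gamma_u * a_prev + (1 - gamma_u) * a_tilde   # new hidden state
    return a_t, a_tilde

rng = np.random.default_rng(0)
n_a, n_x, m = 4, 3, 2
p = {"Wu": np.zeros((n_a, n_a + n_x)),                 # gate driven by bias only
     "Wr": rng.standard_normal((n_a, n_a + n_x)) * 0.1,
     "Wa": rng.standard_normal((n_a, n_a + n_x)) * 0.1,
     "bu": np.zeros((n_a, 1)),
     "br": np.zeros((n_a, 1)),
     "ba": np.zeros((n_a, 1))}
x_t = rng.standard_normal((n_x, m))
a_prev = rng.standard_normal((n_a, m))

p["bu"] = np.full((n_a, 1), 10.0)       # gate close to 1: keep the old state
a_keep, _ = gru_cell_forward(x_t, a_prev, p)

p["bu"] = np.full((n_a, 1), -10.0)      # gate close to 0: take the candidate
a_new, a_tilde = gru_cell_forward(x_t, a_prev, p)

print(np.max(np.abs(a_keep - a_prev)), np.max(np.abs(a_new - a_tilde)))
```

Both printed differences should be close to zero, showing the two extremes of the update gate.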

Gated Recurrent Unit (GRU) Networks: Backward Propagation

The backward-pass equations for GRUs are derived analogously to those for the LSTM.

Again, for convenience, we introduce the following pre-activation variables

\[\begin{align*} \mathbf{z}_u^{\langle t \rangle} &= \mathbf{W}_u[\mathbf{a}^{\langle t-1 \rangle}, \mathbf{x}^{\langle t \rangle}] + \mathbf{b}_u \\ \mathbf{z}_r^{\langle t \rangle} &= \mathbf{W}_r[\mathbf{a}^{\langle t-1 \rangle}, \mathbf{x}^{\langle t \rangle}] + \mathbf{b}_r \\ \mathbf{z}_a^{\langle t \rangle} &= \mathbf{W}_a[\mathbf{\Gamma}_r^{\langle t \rangle} * \mathbf{a}^{\langle t-1 \rangle}, \mathbf{x}^{\langle t \rangle}] + \mathbf{b}_a \end{align*}\]Recall that \(\mathbf{a}^{\langle t \rangle} = \mathbf{\Gamma}_u^{\langle t \rangle} * \mathbf{a}^{\langle t-1 \rangle} + \left(1 - \mathbf{\Gamma}_{u}^{\langle t \rangle}\right) *\mathbf{\tilde{a}}^{\langle t \rangle}\). The hidden state \(\mathbf{a}^{\langle t \rangle}\) therefore feeds into the loss at the current step and also into the next cell, both directly through the term \(\mathbf{\Gamma}_u^{\langle t+1 \rangle} * \mathbf{a}^{\langle t \rangle}\) and through \(\mathbf{z}_u^{\langle t+1 \rangle}\), \(\mathbf{z}_r^{\langle t+1 \rangle}\), and \(\mathbf{z}_a^{\langle t+1 \rangle}\). We can write out the full gradient as the sum from two sources:

\[\begin{align*} \frac{\partial J}{\partial \mathbf{a}^{\langle t \rangle}} &= \frac{\partial J}{\partial \mathbf{a}^{\langle t \rangle}}\bigg|_{\text{local}} + \frac{\partial J}{\partial \mathbf{a}^{\langle t \rangle}}\bigg|_{\text{future}} \\ & = \frac{\partial J}{\partial \mathbf{a}^{\langle t \rangle}}\bigg|_{\text{local}} + \frac{\partial J}{\partial \mathbf{a}^{\langle t+1 \rangle}} \cdot \frac{\partial \mathbf{a}^{\langle t+1 \rangle}}{\partial \mathbf{a}^{\langle t \rangle}} \\ & = \frac{\partial J}{\partial \mathbf{a}^{\langle t \rangle}}\bigg|_{\text{local}} + \mathbf{W}_{u,a}^T d\mathbf{z}_u^{\langle t+1 \rangle} + \mathbf{W}_{r,a}^T d\mathbf{z}_r^{\langle t+1 \rangle} + \left(\mathbf{W}_{a,a}^T d\mathbf{z}_a^{\langle t+1 \rangle}\right) * \mathbf{\Gamma}_r^{\langle t+1 \rangle} + d\mathbf{a}^{\langle t+1 \rangle} * \mathbf{\Gamma}_u^{\langle t+1 \rangle} \end{align*}\]Here \(\mathbf{W}_{*,a}\) denotes the block of \(\mathbf{W}_*\) that multiplies the hidden-state part of the stacked input. The first three future terms come from the paths through \(\mathbf{z}_u^{\langle t+1 \rangle}\), \(\mathbf{z}_r^{\langle t+1 \rangle}\) and \(\mathbf{z}_a^{\langle t+1 \rangle}\), and the last one from the direct path. In practice, the \(d\mathbf{a}^{\langle t \rangle}\) tensor that a cell receives is already the full derivative. But the discussion above does matter when we compute what is passed back to earlier cells. We will cover the computation for \(d\mathbf{a}^{\langle t-1 \rangle}\) at the end of this section.

The derivative for the update gate reads:

\[\begin{align*} d\mathbf{z}_u^{\langle t \rangle} & = \frac{\partial J}{\partial \mathbf{a}^{\langle t \rangle}} \frac{\partial \mathbf{a}^{\langle t \rangle}}{\partial \mathbf{\Gamma}_u^{\langle t \rangle}}\frac{\partial \mathbf{\Gamma}_u^{\langle t \rangle}}{\partial \mathbf{z}_u^{\langle t \rangle}} \\ \Rightarrow d\mathbf{z}_u^{\langle t \rangle} &= d\mathbf{a}^{\langle t \rangle} * \left(\mathbf{a}^{\langle t-1 \rangle} - \mathbf{\tilde{a}}^{\langle t \rangle}\right) * \mathbf{\Gamma}_u^{\langle t \rangle} * \left(1 - \mathbf{\Gamma}_u^{\langle t \rangle}\right) \end{align*}\]For the candidate value, we find:

\[\begin{align*} d\mathbf{z}_a^{\langle t \rangle} &= \frac{\partial J}{\partial \mathbf{a}^{\langle t \rangle}} \frac{\partial \mathbf{a}^{\langle t \rangle}}{\partial \mathbf{\tilde{a}}^{\langle t \rangle}} \frac{\partial \mathbf{\tilde{a}}^{\langle t \rangle}}{\partial \mathbf{z}_a^{\langle t \rangle}} \\ \Rightarrow d\mathbf{z}_a^{\langle t \rangle} &= d\mathbf{a}^{\langle t \rangle} * \left(1 - \mathbf{\Gamma}_u^{\langle t \rangle}\right) * \left(1 - \left(\mathbf{\tilde{a}}^{\langle t \rangle}\right)^2\right) \end{align*}\]Lastly, the relevance gate enters the candidate computation before the weight multiplication, so its gradient path passes through \(d\mathbf{z}_a^{\langle t \rangle}\) rather than directly through \(d\mathbf{a}^{\langle t \rangle}\). This makes it the one gate derivative without a direct counterpart in the LSTM backward pass. We have:

\[\begin{align*} d\mathbf{z}_r^{\langle t \rangle} &= \frac{\partial J}{\partial \mathbf{z}_a^{\langle t \rangle}} \frac{\partial \mathbf{z}_a^{\langle t \rangle}}{\partial \mathbf{\Gamma}_r^{\langle t \rangle}} \frac{\partial \mathbf{\Gamma}_r^{\langle t \rangle}}{\partial \mathbf{z}_r^{\langle t \rangle}} \\ \Rightarrow d\mathbf{z}_r^{\langle t \rangle} &= \left(\mathbf{W}_{a,a}^T d\mathbf{z}_a^{\langle t \rangle}\right) * \mathbf{a}^{\langle t-1 \rangle} * \mathbf{\Gamma}_r^{\langle t \rangle} * \left(1 - \mathbf{\Gamma}_r^{\langle t \rangle}\right) \end{align*}\]Identical in structure to the LSTM case, we can find the parameter derivatives

\[\begin{align*} d\mathbf{W}_* &= d\mathbf{z}_*^{\langle t \rangle} \begin{bmatrix} \mathbf{a}^{\langle t - 1 \rangle} \\ \mathbf{x}^{\langle t \rangle} \end{bmatrix}^T \\ d\mathbf{b}_* &= \sum_\text{batch} d\mathbf{z}_*^{\langle t \rangle} \end{align*}\]where “\(*\)” stands for \(u\), \(r\), \(a\) in turn. Note that for \(d\mathbf{W}_a\), the stacked input vector should use \(\mathbf{\Gamma}_r^{\langle t \rangle} * \mathbf{a}^{\langle t-1 \rangle}\) in place of \(\mathbf{a}^{\langle t-1 \rangle}\), reflecting the forward pass definition of \(\mathbf{z}_a^{\langle t \rangle}\).

The derivative with respect to the previous hidden state \(\mathbf{a}^{\langle t-1 \rangle}\) receives contributions from the three local pre-activation gradients, plus a direct path through the update gate term. This reads:

\[d\mathbf{a}^{\langle t-1 \rangle} = \mathbf{W}_{u,a}^T d\mathbf{z}_u^{\langle t \rangle} + \mathbf{W}_{r,a}^T d\mathbf{z}_r^{\langle t \rangle} + \left(\mathbf{W}_{a,a}^T d\mathbf{z}_a^{\langle t \rangle}\right) * \mathbf{\Gamma}_r^{\langle t \rangle} + d\mathbf{a}^{\langle t \rangle} * \mathbf{\Gamma}_u^{\langle t \rangle}\]The last term \(d\mathbf{a}^{\langle t \rangle} * \mathbf{\Gamma}_u^{\langle t \rangle}\) can be understood as the GRU analogue of the LSTM cell-state highway \(d\mathbf{c}_\text{prev}^{\langle t - 1 \rangle} = d \mathbf{c}^{\langle t \rangle} * \mathbf{\Gamma}_f^{\langle t \rangle}\). When \(\mathbf{\Gamma}_u^{\langle t \rangle} \approx 1\), the hidden state is carried forward largely unchanged and this term dominates, propagating gradients back cleanly over long distances. This is the mechanism by which GRUs mitigate the vanishing gradient problem.
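As with the LSTM, the GRU backward formulas can be checked numerically. The following NumPy sketch implements one backward step and compares it against central finite differences. The function names, the toy loss \(J = \sum \mathbf{R} * \mathbf{a}^{\langle t \rangle}\) (so that `R` plays the role of \(d\mathbf{a}^{\langle t \rangle}\)) and the sizes are my own assumptions. The slices `[:, :n_a]` and `[:, n_a:]` pick out the blocks of each weight matrix acting on the hidden state and on the input, respectively.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def gru_forward(x_t, a_prev, p):
    concat = np.vstack([a_prev, x_t])
    gu = sigmoid(p["Wu"] @ concat + p["bu"])
    gr = sigmoid(p["Wr"] @ concat + p["br"])
    concat_r = np.vstack([gr * a_prev, x_t])
    at = np.tanh(p["Wa"] @ concat_r + p["ba"])        # candidate hidden state
    a_t = gu * a_prev + (1 - gu) * at
    return a_t, (concat, concat_r, gu, gr, at, a_prev)

def gru_backward(da, cache, p):
    """da is the full gradient dJ/da_t received by this cell."""
    concat, concat_r, gu, gr, at, a_prev = cache
    n_a = a_prev.shape[0]
    dz_u = da * (a_prev - at) * gu * (1 - gu)
    dz_a = da * (1 - gu) * (1 - at ** 2)
    d_ra = p["Wa"][:, :n_a].T @ dz_a                  # grad w.r.t. gr * a_prev
    dz_r = d_ra * a_prev * gr * (1 - gr)
    dz = {"u": dz_u, "r": dz_r, "a": dz_a}
    inputs = {"u": concat, "r": concat, "a": concat_r}
    grads = {}
    for g in dz:
        grads["W" + g] = dz[g] @ inputs[g].T
        grads["b" + g] = dz[g].sum(axis=1, keepdims=True)
    grads["a_prev"] = (p["Wu"][:, :n_a].T @ dz_u + p["Wr"][:, :n_a].T @ dz_r
                       + d_ra * gr + da * gu)         # last term: direct path
    grads["x"] = (p["Wu"][:, n_a:].T @ dz_u + p["Wr"][:, n_a:].T @ dz_r
                  + p["Wa"][:, n_a:].T @ dz_a)
    return grads

# Random toy problem (all sizes and values are arbitrary).
rng = np.random.default_rng(0)
n_a, n_x, m = 4, 3, 2
p = {}
for g in "ura":
    p["W" + g] = rng.standard_normal((n_a, n_a + n_x))
    p["b" + g] = rng.standard_normal((n_a, 1))
x_t = rng.standard_normal((n_x, m))
a_prev = rng.standard_normal((n_a, m))
R = rng.standard_normal((n_a, m))

def toy_loss():
    a_t, _ = gru_forward(x_t, a_prev, p)
    return np.sum(R * a_t)

def numerical_grad(arr, eps=1e-6):
    """Central differences, perturbing `arr` in place one entry at a time."""
    num = np.zeros_like(arr)
    for idx in np.ndindex(arr.shape):
        old = arr[idx]
        arr[idx] = old + eps
        j_plus = toy_loss()
        arr[idx] = old - eps
        j_minus = toy_loss()
        arr[idx] = old
        num[idx] = (j_plus - j_minus) / (2 * eps)
    return num

_, cache = gru_forward(x_t, a_prev, p)
grads = gru_backward(R, cache, p)
targets = {"Wu": p["Wu"], "Wr": p["Wr"], "Wa": p["Wa"], "br": p["br"],
           "a_prev": a_prev, "x": x_t}
errors = {k: np.max(np.abs(numerical_grad(v) - grads[k]))
          for k, v in targets.items()}
print(errors)
```

Agreement on `Wr` and `a_prev` is the most informative part of the check, since those gradients depend on the relevance-gate path through \(d\mathbf{z}_a^{\langle t \rangle}\).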