Neural Style Transfer (Deep Learning Notes C4W4)

These are some notes for the Coursera Course on Convolutional Neural Networks, which is a part of the Deep Learning Specialization. This post is a summary of the course contents that I learned from Week 4.

These notes are far from original. All credits go to the course instructor Andrew Ng and his team.

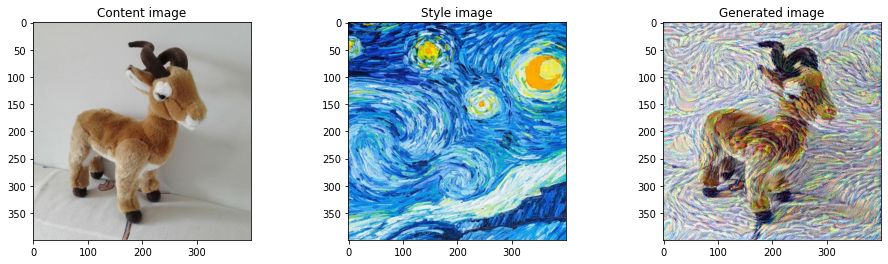

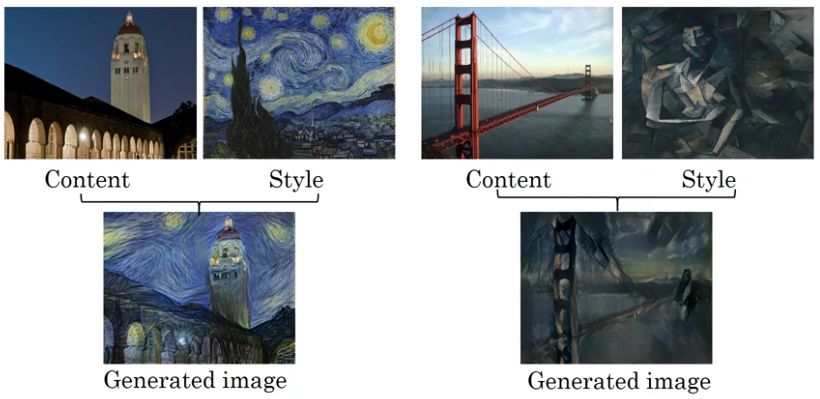

Neural Style Transfer: Overview

The goal of Neural Style Transfer (NST) is to merge two images, a content image (\(C\)) and a style image (\(S\)), to create a generated image (\(G\)) such that the generated image \(G\) combines the content of the image \(C\) with the style of image \(S\).

This is implemented by extracting the content statistics of the content image \(C\) and the style statistics of the style image \(S\) using a deep convolutional network, and then optimizing the output image \(G\) to match these statistics.

Choosing the Layers of the Model

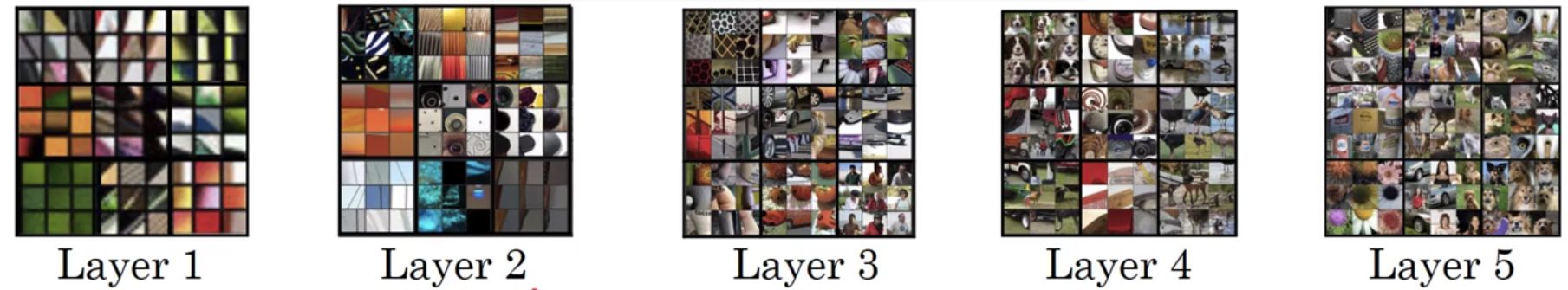

Starting from the network’s input layer, the first few layer activations represent low-level features like edges, corners and simple textures. As we step through the network, the deeper layers detect higher-level features like parts of specific objects and more complex patterns.

Neural Style Transfer uses intermediate layers of a pre-trained image classification network such as VGG19, so that the content representation captures the image’s structures and objects while discarding pixel-level details that are not relevant to the main objective. Choosing which intermediate layers to use lets the NST algorithm control the balance between preserving the content image’s structure and applying the style image’s aesthetic.

Cost Functions for NST

The cost function that we use for the NST algorithm contains two terms:

- a content cost function \(J_\text{content}(C,G)\) that measures how different the contents of \(C\) and \(G\) are

- a style cost function \(J_\text{style}(S,G)\) that measures how different the styles of \(S\) and \(G\) are

Then the total cost is defined as the linear combination of the two:

\[J(G) = \alpha J_\text{content}(C,G) + \beta J_\text{style}(S,G)\]

Content Cost Function \(J_\text{content}(C,G)\)

The generated image \(G\) should match the content of image \(C\). The content cost function can be defined as:

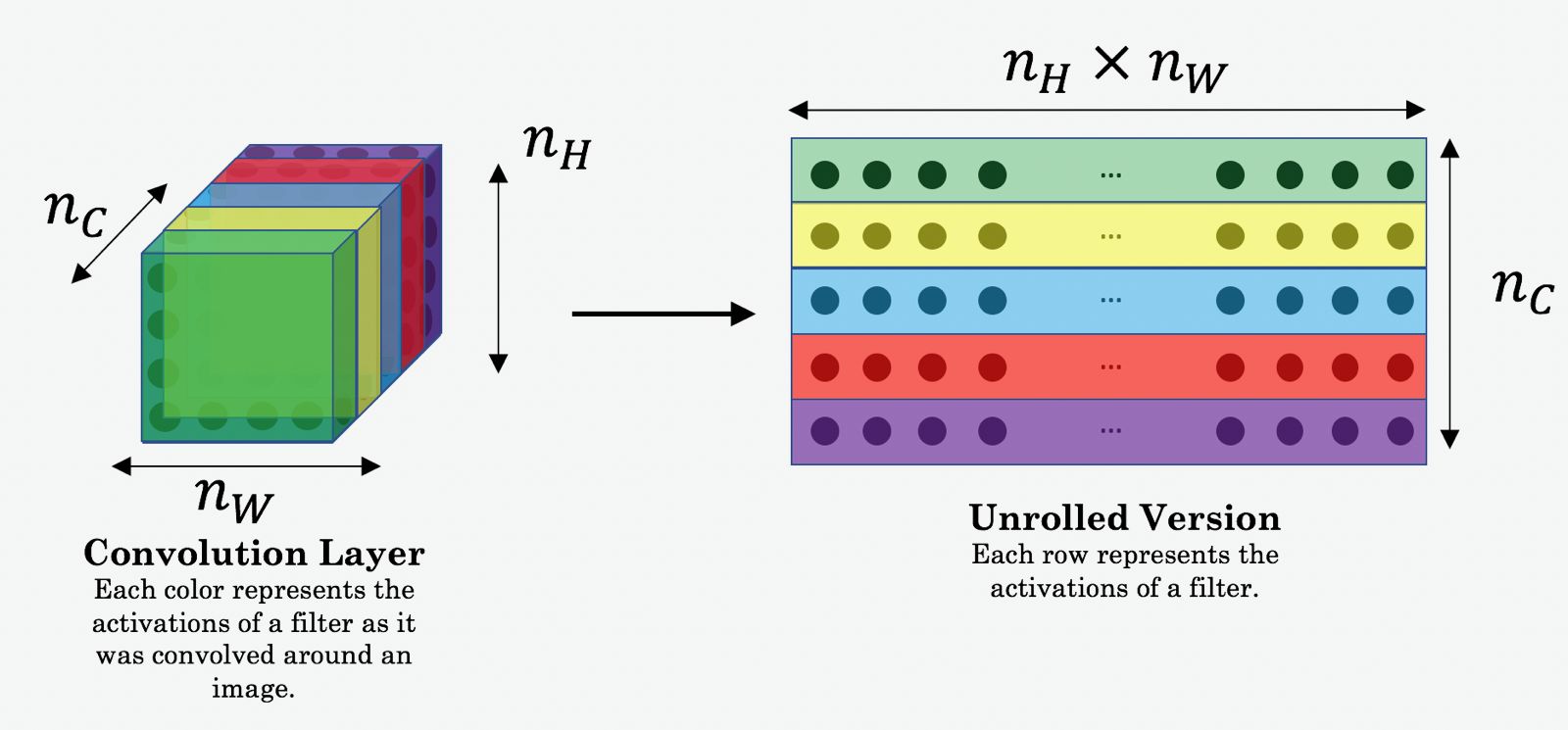

\[J_\text{content}(C,G) = \frac{1}{4 \times n_H \times n_W \times n_C}\sum _{ \text{all entries}} (a^{(C)} - a^{(G)})^2 \tag{1}\]

Here, \(n_H, n_W\) and \(n_C\) are the height, width and number of channels of the intermediate hidden layer we have chosen, and they appear as a normalization term in the cost. The terms \(a^{(C)}\) and \(a^{(G)}\) are the 3D volumes of that hidden layer’s activations when the network is fed \(C\) and \(G\), respectively. The content cost then measures how different \(a^{(C)}\) and \(a^{(G)}\) are. When we minimize the content cost later, this will help make sure \(G\) has similar content to \(C\).

In order to compute the cost \(J_\text{content}(C,G)\), it might also be convenient to unroll these 3D volumes into a 2D matrix, as shown below. Technically this unrolling step is not necessary for computing \(J_\text{content}\), but it will be good practice for when we carry out a similar operation later for computing the style cost \(J_\text{style}\).
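As a rough illustration of Eq. (1) and the unrolling step, here is a minimal NumPy sketch. It assumes the activations are already available as arrays of shape \((n_H, n_W, n_C)\); the function name `content_cost` and the random test arrays are hypothetical and only stand in for real network activations.

```python
import numpy as np

def content_cost(a_C, a_G):
    """Content cost of Eq. (1) for activations of shape (n_H, n_W, n_C)."""
    n_H, n_W, n_C = a_C.shape
    # Unroll each 3D volume into a 2D matrix of shape (n_C, n_H * n_W).
    # Not required for the content cost, but mirrors the style-cost step.
    a_C_unrolled = a_C.reshape(n_H * n_W, n_C).T
    a_G_unrolled = a_G.reshape(n_H * n_W, n_C).T
    squared_diff = (a_C_unrolled - a_G_unrolled) ** 2
    return np.sum(squared_diff) / (4 * n_H * n_W * n_C)

# Hypothetical stand-ins for the hidden-layer activations of C and G.
rng = np.random.default_rng(0)
a_C = rng.normal(size=(4, 4, 3))
a_G = rng.normal(size=(4, 4, 3))
J_content = content_cost(a_C, a_G)
```

Because the cost sums over all entries, the unrolled and the original 3D layouts give the same value; the reshape only changes how the entries are arranged.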

Style Matrix

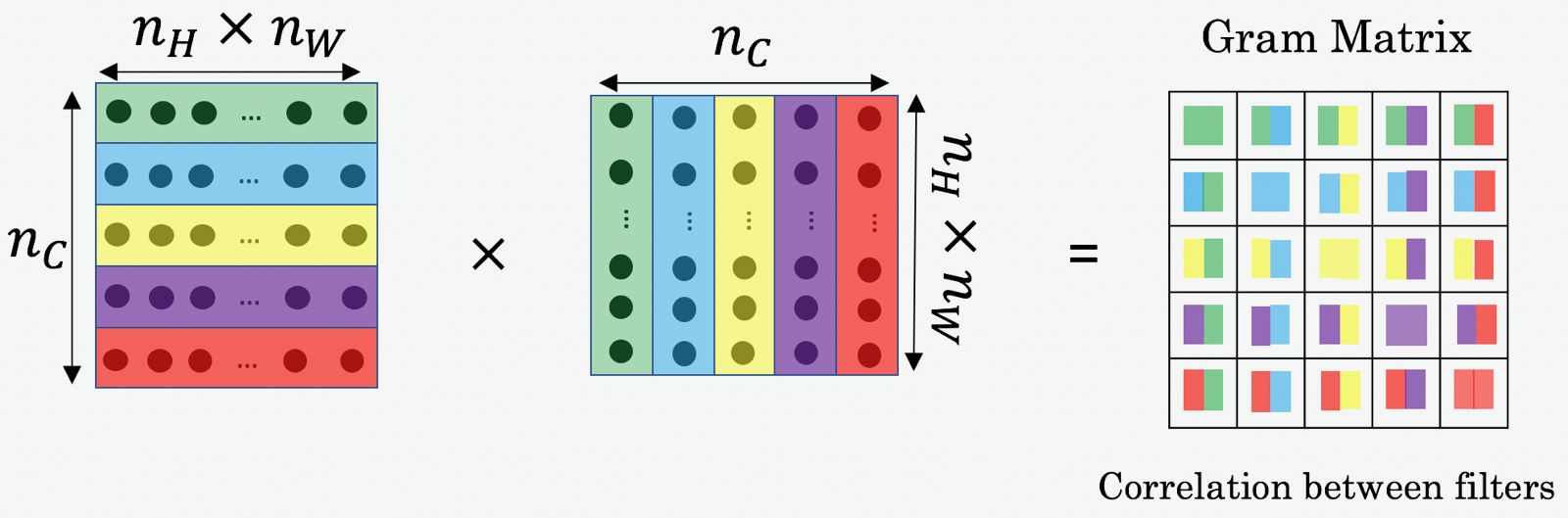

The style of an image can be represented using the Gram matrix of an intermediate layer’s activations.

In linear algebra, the Gram matrix \(\mathbf{G}\) of a set of vectors \((v_{1},\dots ,v_{n})\) is the matrix of dot products, whose entries are

\[\mathbf{G}_{ij} = v_{i}^T v_{j} = \text{np.dot}(v_{i}, v_{j})\]

In NST, we compute the style matrix by multiplying the unrolled activation matrix \(\mathbf{A}_\text{unrolled}\), of shape \(n_C \times (n_H \times n_W)\), by its transpose:

\[\mathbf{G}_\text{gram} = \mathbf{A}_\text{unrolled} \mathbf{A}_\text{unrolled}^T\]

In components, suppose the activation at row \(i\), column \(j\) and channel \(k\) of a chosen hidden layer is represented by \(a_{i,j,k}\). Then the matrix element of \(\mathbf{G}_\text{gram}\) is:

\[\mathbf{G}_{\text{(gram)}k,k'} = \sum_{i=1}^{n_H} \sum_{j=1}^{n_W} a_{i,j,k} \,a_{i,j,k'}\]

The result will be a matrix of dimension \(n_C \times n_C\) where \(n_C\) is the number of channels of that intermediate layer.
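The two ways of writing the Gram matrix (unrolled matrix product and explicit sum over positions) can be checked against each other with a short NumPy sketch. The helper name `gram_matrix` and the toy activation volume are assumptions made for illustration, not part of the course code.

```python
import numpy as np

def gram_matrix(a):
    """Gram (style) matrix of activations with shape (n_H, n_W, n_C)."""
    n_H, n_W, n_C = a.shape
    # Unroll to shape (n_C, n_H * n_W): one row per channel.
    A_unrolled = a.reshape(n_H * n_W, n_C).T
    # Multiply by the transpose to get an (n_C, n_C) matrix.
    return A_unrolled @ A_unrolled.T

# Toy activation volume with n_H = 2, n_W = 3, n_C = 4.
a = np.arange(24, dtype=float).reshape(2, 3, 4)
G_gram = gram_matrix(a)

# Component form: sum over positions (i, j) of a[i, j, k] * a[i, j, k'].
G_components = np.einsum("ijk,ijl->kl", a, a)
```

Both results have shape \(n_C \times n_C\) and the matrix is symmetric, since the dot product of channel \(k\) with channel \(k'\) does not depend on the order.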

The matrix element \(\mathbf{G}_{k,k'}\) measures how similar the activations of filter \(k\) are to the activations of filter \(k'\). If \(a_k\) and \(a_{k'}\) are highly correlated, their dot product should be large and thus we expect the matrix element \(\mathbf{G}_{k,k'}\) to be large.

The diagonal elements \(\mathbf{G}_{k,k}\) measure the level of activity of filter \(k\). If \(\mathbf{G}_{k,k}\) is large, the image contains a lot of the feature detected by channel \(k\).

By capturing the prevalence of different types of features (\(\mathbf{G}_{k,k}\)) as well as how often different features occur together (\(\mathbf{G}_{k,k'}\)), the matrix \(\mathbf{G}_\text{gram}\) measures the style of the image.

Style Cost

After calculating the Gram matrix, the next goal is to minimize the distance between the Gram matrix of the style image \(S\) and the Gram matrix of the generated image \(G\).

For a single hidden layer, we can compute the Gram matrices of the style image \(S\) and the generated image \(G\), that is \(\mathbf{G}_\text{gram}^{(S)}\) and \(\mathbf{G}_\text{gram}^{(G)}\), using the activations \(a^{[l]}\) from this particular intermediate layer in the network. The corresponding style cost is defined as:

\[J_\text{style}^{[l]}(S,G) = \frac{1}{4 \times {n_C}^2 \times (n_H \times n_W)^2} \sum _{k=1}^{n_C}\sum_{k'=1}^{n_C}(\mathbf{G}^{(S)}_{\text{(gram)}k,k'} - \mathbf{G}^{(G)}_{\text{(gram)}k,k'})^2\tag{2}\]

Better results can be obtained if the representation of the style image \(S\) is computed from multiple different layers and combined. The style costs from several different layers can be merged to give:

\[J_\text{style}(S,G) = \sum_{l} \lambda^{[l]} J^{[l]}_\text{style}(S,G)\]

where \(\lambda^{[l]}\) are the weights that reflect how much each layer contributes to the style. They are subject to the normalisation condition \(\sum_{l=1}^L\lambda^{[l]} = 1\).
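The per-layer cost of Eq. (2) and the weighted sum over layers can be sketched in NumPy as follows. This is only an illustration: the function names, the two made-up layer shapes and the equal weights are assumptions, and real activations would come from the pre-trained network.

```python
import numpy as np

def gram_matrix(a):
    """Gram matrix of activations with shape (n_H, n_W, n_C)."""
    n_H, n_W, n_C = a.shape
    A_unrolled = a.reshape(n_H * n_W, n_C).T
    return A_unrolled @ A_unrolled.T

def layer_style_cost(a_S, a_G):
    """Style cost of Eq. (2) for a single hidden layer."""
    n_H, n_W, n_C = a_S.shape
    diff = gram_matrix(a_S) - gram_matrix(a_G)
    return np.sum(diff ** 2) / (4 * n_C**2 * (n_H * n_W) ** 2)

def style_cost(activations_S, activations_G, weights):
    """Weighted sum of per-layer style costs; weights must sum to 1."""
    if not np.isclose(sum(weights), 1.0):
        raise ValueError("layer weights must sum to 1")
    return sum(
        w * layer_style_cost(a_S, a_G)
        for w, a_S, a_G in zip(weights, activations_S, activations_G)
    )

# Hypothetical activations from two layers (shapes chosen for illustration).
rng = np.random.default_rng(0)
shapes = [(8, 8, 4), (4, 4, 8)]
activations_S = [rng.normal(size=s) for s in shapes]
activations_G = [rng.normal(size=s) for s in shapes]
J_style = style_cost(activations_S, activations_G, weights=[0.5, 0.5])
```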

The choice of the coefficients for each layer depends on how closely we want the generated image to follow the style image. Deeper layers capture higher-level concepts, and their features are less localized in the image relative to each other. So if we want the generated image to follow the style image softly, we can choose larger weights for the deeper layers and smaller weights for the earlier layers. In contrast, if we want the generated image to strongly follow the style image, we can choose smaller weights for the deeper layers and larger weights for the earlier layers.

Total Cost

The content cost and the style cost functions are combined to give the total cost:

\[J(G) = \alpha J_\text{content}(C,G) + \beta J_\text{style}(S,G)\]

Here \(\alpha\) and \(\beta\) are hyperparameters that control the relative weighting between content and style.
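In code the total cost is a one-line weighted sum. The sketch below uses a hypothetical helper `total_cost`; the cost values and the choices of \(\alpha\) and \(\beta\) are placeholders picked only to show how the weighting shifts the balance, not recommended settings.

```python
def total_cost(J_content, J_style, alpha, beta):
    """Total NST cost: alpha * J_content + beta * J_style."""
    return alpha * J_content + beta * J_style

# Placeholder cost values, for illustration only.
J_content, J_style = 1.0, 2.0

# Larger beta relative to alpha puts more emphasis on matching the style.
J_style_heavy = total_cost(J_content, J_style, alpha=10.0, beta=40.0)
# Larger alpha relative to beta puts more emphasis on preserving content.
J_content_heavy = total_cost(J_content, J_style, alpha=40.0, beta=10.0)
```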

By loading the VGG19 model and choosing a suitable optimizer to minimize the total cost with respect to the generated image \(G\), we can implement Neural Style Transfer and have fun generating artistic images!