Beam Search & BLEU Score (Deep Learning Notes C5W3)

These are some notes for the Coursera Course on Sequence Models, which is the last part of the Deep Learning Specialization. This post is a summary of the course contents that I learned from Week 3.

These notes are far from original. All credits go to the course instructor Andrew Ng and his team.

Encoder-Decoder Architecture

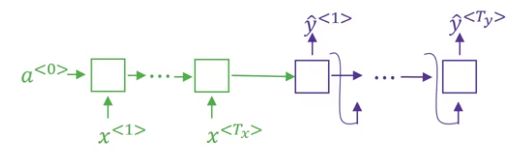

Let’s begin with a machine translation problem, say we want to translate a French sentence \(X\) into an English sentence \(Y\). The architecture for such sequence-to-sequence models typically include an encoder and a decoder.

- The encoder, usually an RNN (LSTM or GRU), takes the input sequence and outputs a vector that represents the whole sequence

- The decoder, also an RNN, takes the vector generated by the encoder and outputs the new sequence

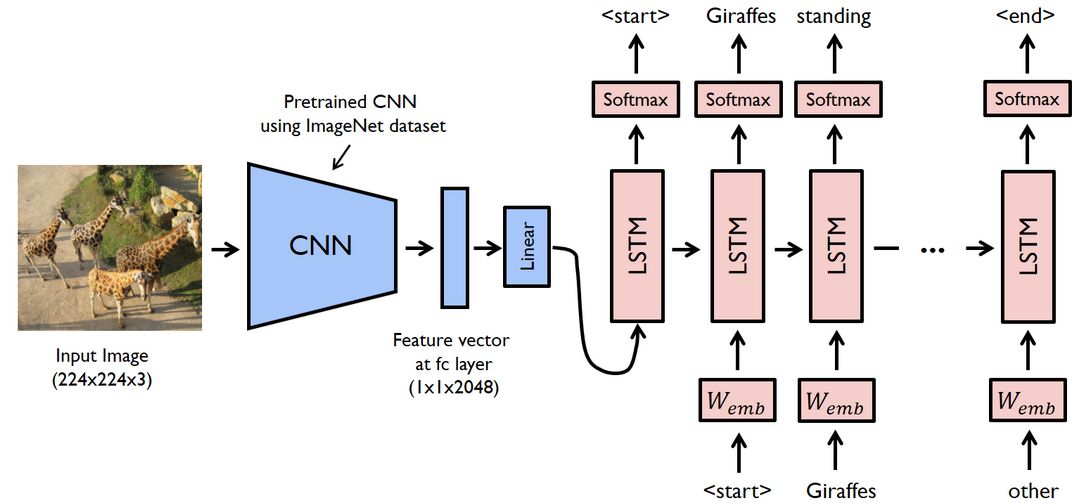

A similar encoder-decoder architecture can also be used for image captioning, where a CNN plays the role as a visual encoder and the decoder is a RNN.

We have introduced a language model that can generate a sequence of words \(\mathbf{y}^{\langle1\rangle}, \cdots, \mathbf{y}^{\langle T_y \rangle}\) based on probability maximisation. The decoder is similar to such language model, but instead of taking a zero vector as the initial hidden state \(\mathbf{a}^{\langle0\rangle}\), the decoder takes the output vector of the encoder, so the output of the decoder is conditioned on the input sequence. Therefore, the probability that we wish to maximise would be different:

- for the language model, our objective is: \(\displaystyle \arg \max_{\mathbf{y}^{\langle1\rangle}, \cdots, \mathbf{y}^{\langle T_y \rangle} } P \left(\mathbf{y}^{\langle1\rangle}, \cdots, \mathbf{y}^{\langle T_y \rangle}\right)\)

- for the machine translation problem, our objective is: \(\displaystyle \arg \max_{\mathbf{y}^{\langle1\rangle}, \cdots, \mathbf{y}^{\langle T_y \rangle} } P \left(\mathbf{y}^{\langle1\rangle}, \cdots, \mathbf{y}^{\langle T_y \rangle} \big\vert \mathbf{x}^{\langle1\rangle}, \cdots, \mathbf{x}^{\langle T_x \rangle} \right)\)

Beam Search

One naïve solution to find the most probable output sequence is by greedy search, where at each time step the word with the highest probability is chosen. But choosing the most probable word at the current time step does not always maximise the conditional probability as a whole, so greedy approach does not result in very good results.

A useful algorithm better than the greedy search is the beam search. We will talk about how beam search works in the following paragraphs.

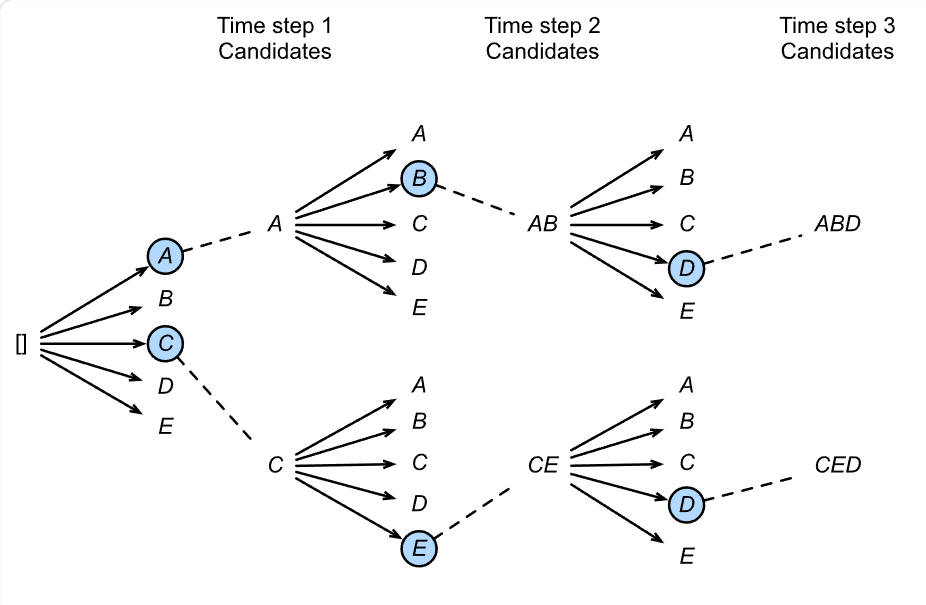

The algorithm has a parameter \(B\) called the beam width, which represents the number of the most probable words that we would keep at each time step.

Taking the machine translation problem as our example, once the decoder receives the output of the encoder, we would obtain a probability distribution over all \(V\) words for the first word of the output sentence where \(V\) is the size of the vocabulary. We then keep the top \(B\) outputs, i.e., the outputs \(\mathbf{y}^{\langle1\rangle (1)}, \cdots, \mathbf{y}^{\langle1\rangle (B)}\) that have the highest probabilities \(P\left(\mathbf{y}^{\langle1\rangle} \big\vert X \right)\) among all \(V\) possible words.

For each of the \(\mathbf{y}^{\langle1\rangle (1)}, \cdots, \mathbf{y}^{\langle1\rangle (B)}\), we use \(B\) copies of the same network to predict the second word \(\mathbf{y}^{\langle2\rangle}\), so we now obtain \(B \times B\) combinations of \((\mathbf{y}^{\langle1\rangle}, \mathbf{y}^{\langle2\rangle})\). We then select the top best \(B\) combinations, i.e., the combinations with the highest probability \(P\left(\mathbf{y}^{\langle2\rangle}, \mathbf{y}^{\langle1\rangle} \big\vert X \right)\). Note that this probability can be calculated using:

\[P\left(\mathbf{y}^{\langle2\rangle}, \mathbf{y}^{\langle1\rangle} \big\vert X \right) = P\left(\mathbf{y}^{\langle1\rangle} \big\vert X \right) \times P\left(\mathbf{y}^{\langle2\rangle} \big \vert X, \mathbf{y}^{\langle1\rangle} \right)\]In the next step, we continue with the \(B\) doublets and then proceed to use \(B\) networks to find the most probable \(B\) triplets of words with the highest \(P\left(\mathbf{y}^{\langle3\rangle}, \mathbf{y}^{\langle2\rangle}, \mathbf{y}^{\langle1\rangle} \big\vert X \right)\), and so on.

With beam search, we keep only \(B\) instances of the network at each time step. We are not guaranteed to find the optimal solution, so it is not an exact search algorithm like BFS (breadth first search) or DFS (depth first search). If we take \(B=1\), the algorithm becomes the greedy search. Larger choices of \(B\) lead to greater chances to obtain better results but at the cost of running time. In practice, it is common to set \(B\approx10\).

Numerical Stability & Length Normalisation

Recall that we are maximising the conditional probability of the form

\[P\left(\mathbf{y}^{\langle t\rangle}, \cdots, \mathbf{y}^{\langle1\rangle} \big\vert X \right) = P\left(\mathbf{y}^{\langle 1\rangle} \big\vert X \right) \times P\left(\mathbf{y}^{\langle2\rangle} \big \vert X, \mathbf{y}^{\langle1\rangle} \right) \times \cdots \times P\left(\mathbf{y}^{\langle t\rangle} \big \vert X, \mathbf{y}^{\langle 1 \rangle}, \cdots \mathbf{y}^{\langle t-1 \rangle} \right)\]which is computed as the product of many probabilities. Since many of those probabilities are very small, so multiplying them can result in numerical overflow. One solution to this problem is to use the sum of the logarithms of the probabilities:

\[\arg \max_{\mathbf{y}^{\langle1\rangle}, \cdots, \mathbf{y}^{\langle T_y \rangle} } \sum_{t=1}^{T_y} \log P\left(\mathbf{y}^{\langle t\rangle} \big \vert X, \mathbf{y}^{\langle 1 \rangle}, \cdots \mathbf{y}^{\langle t-1 \rangle} \right)\]However, this leads to yet another problem that the optimisation function would prefer shorter sequences over longer ones. This is because probabilities are less than one, the more probabilities we multiply (or adding their logarithms, which would take negative values), the lower value we get. This leads to the length normalisation scheme, that is to take a biased average of the logarithms of the probabilities:

\[\arg \max_{\mathbf{y}^{\langle1\rangle}, \cdots, \mathbf{y}^{\langle T_y \rangle} } \frac{1}{T_y^\alpha} \sum_{t=1}^{T_y} \log P\left(\mathbf{y}^{\langle t\rangle} \big \vert X, \mathbf{y}^{\langle 1 \rangle}, \cdots \mathbf{y}^{\langle t-1 \rangle} \right)\]where \(\alpha\) is a heuristic parameter that controls how much we prefer shorter sentences. If \(\alpha = 0\) then there is no length normalisation. If \(\alpha = 1\) then there is full sequence length normalisation. In practice, somewhere in between the two extremes works best. Andrew suggests that taking \(\alpha = 0.7\) is a good empirical choice.

Error Analysis in Beam Search

The error in the model predictions may be due to the beam search algorithm or the RNN model itself, so it is worth spending time on figuring out where things go wrong.

Given a predicted output \(\hat{Y}\) and a possible correct translation \(Y^*\) given by a human:

-

Case 1: \(P \left( Y^* \big\vert X \right) > P \left( \hat{Y} \big\vert X \right)\)

This suggests we did not find the optimal output sequence, so beam search is at fault.

-

Case 2: \(P \left( Y^* \big\vert X \right) < P \left( \hat{Y} \big\vert X \right)\)

This means the model has assigned a higher probability to a worse translation, so RNN model is at fault as its predicted probability distribution is not accurate.

For a complete error analysis, we can take many error examples and find out what fraction of errors are due to beam search or due to RNN model. A deeper error analysis for each part can then be done based on the counts.

BLEU Score

One of the challenges in machine translation is that there could be multiple equally good translations of a given sentence. How we evaluate the accuracy of the system when there are multiple good answers is to use the BLEU score, where BLEU stands for bilingual evaluation understudy. For a given sentence, we collect several reference translations provided by humans. As long as the machine-generated translation is close to any of the references, it gets a high BLEU score.

To begin with, we may evaluate the machine-generated output is to look at each word in the output and check whether it is in any one of the references. This is called the precision. However, this is not a useful measure because the machine can output a list of repeated common words (like “The the the the the the.”) to gain very high precision. So it is better to use a modified precision: for a particular word, we look for the maximum number of occurences of this word in the reference sentences, and clip the count by this maximum number.

But a bunch of matching words does not necessarily mean that the candidate is a good translation, as the words might come out in a random order so that the sentence is not well structured. We can further evaluate the quality of the machine-generated output by looking at the overlap of more than one words at a time, or \(n\)-grams, as they can capture local word order and phrasal coherence. The modified precision of the \(n\)-gram is:

\[p_n = \frac{\sum_{n\text{-gram} \in \hat{Y}} \text{count_clip_of_}n\text{-gram}}{\text{number_of_candidate_}n\text{-grams}}\]Let’s illustrate this with the same example in Andrew’s course.

- Original French sentence \(X\): Le chat est sur la tapis.

- Human reference \(Y1\): The cat is on the mat.

- Human reference \(Y2\): There is a cat on the mat.

- Machine output \(\hat{Y}\): The cat the cat on the mat.

If we look at \(n\)-grams at order 2, i.e., bigrams, we construct a table like this:

| 2-grams | Count | Count clip |

|---|---|---|

| the cat | 2 | 1 (\(Y1\)) |

| cat the | 1 | 0 |

| cat on | 1 | 1 (\(Y2\)) |

| on the | 1 | 1 (\(Y1\) or \(Y2\)) |

| the mat | 1 | 1 (\(Y1\) or \(Y2\)) |

| Total | 6 | 4 |

The precision score for the bigram in this example is therefore: \(\displaystyle p_2 = \frac{4}{6}\).

The combined BLEU score for \(n\)-grams of different sizes up to some maximum order \(N\) (usually up to 4) is:

\[\text{BLEU} = \text{BP} \times \mathrm{e}^{\frac{1}{N} \sum_{n=1}^N \ln p_n}\]The term \(\text{BP}\) is the brevity penalty factor, which deals with the case where a model gains a good precision score by outputting fewer words while missing some bits of information. Let \(c\) be the length of the machine-generated candidate and \(r\) be the length of closest reference translations (some take \(r\) as the average length of reference translations), the BP penalty factor is given by:

\[\text{BP} = \left\{ \begin{array}{ll} 1 & \text{if } c > r \\ \mathrm{e}^{1-\frac{r}{c}} & \text{if } c \leq r \end{array}\right.\]For very short output sentence, \(\frac{r}{c} > 1\), so \(\mathrm{e}^{1-\frac{r}{c}} < 1\), this means short translations are indeed penalised.

BLEU score was proposed by researchers at IBM T. J. Watson Research Center in 2001 so that the evaluation of machine translations can be quicker, cheaper and less human labour. It remains a popular automated and inexpensive metrics in machine translation and has many open source implementations. The known limitations of BLEU is that it does not account for synonyms and fluency, and BLEU has been partially superseded by metrics like METEOR and BERTScore in modern NLP researches.